This article details how Pinterest scaled its recommendation system to leverage vast lifelong user data, significantly improving personalization and user engagement through innovative ML models and efficient serving infrastructure.

Xue Xia | Machine Learning Engineer, Home Feed Ranking; Saurabh Vishwas Joshi | Principal Engineer, ML Platform; Kousik Rajesh | Machine Learning Engineer, Applied Science; Kangnan Li | Machine Learning Engineer, Core ML Infrastructure; Yangyi Lu | Machine Learning Engineer, Home Feed Ranking; Nikil Pancha | (formerly) Machine Learning Engineer, Applied Science; Dhruvil Deven Badani | Engineering Manager, Home Feed Ranking; Jiajing Xu | Engineering Manager, Applied Science; Pong Eksombatchai | Principal Machine Learning Engineer, Applied Science

The Pinterest home feed is crucial for Pinner engagement and discovery. Pins on the home feed are personalized using a two-stage process: initial retrieval of candidate pins based on user interests, followed by ranking with the home feed (Pinnability) model. This model — a neural network consuming various pin, context, and user signals — predicts personalized pin relevance to improve user experience. Its architecture is illustrated in the following figure.

In 2023, we published TransAct (arXiv), which uses transformers to model the Pinner’s last 100 actions in Pinnability. One shortcoming of that approach is that it cannot model the user’s lifelong behavior on Pinterest.

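Today, we describe how we solved that with TransActV2, which features three key innovations: lifelong user sequence modeling, a Next Action Loss (NAL) auxiliary task, and a serving infrastructure that makes very long sequences practical in production.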

Please refer to our paper on arXiv (link) for a thorough technical deep dive on TransActV2.

Why care about a user’s actions from weeks, months, or even years ago?

TransActV2 addresses these fundamental challenges and unlocks a new frontier for lifelong, real-time personalization at Pinterest.

TransActV2 introduces several technical advances:

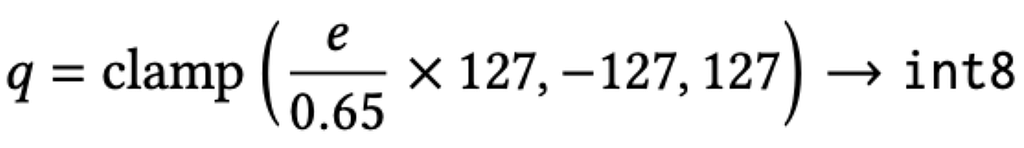

Formally, the lifelong (LL) sequence S_{LL} is defined for each user as a chronologically-ordered series of tokens, where each token contains the above features. To reduce storage, the PinSage vector e (32-dim float16) is quantized:
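To illustrate the storage trade-off, here is a minimal sketch of quantizing a 32-dimensional embedding to 8-bit integers. It assumes simple symmetric per-vector quantization; the scheme, the scale choice, and the function names `quantize_int8` and `dequantize_int8` are illustrative assumptions, not necessarily what TransActV2 uses.

```python
import random

def quantize_int8(vec):
    """Symmetric per-vector quantization (assumed scheme): floats -> ints in [-127, 127]."""
    max_abs = max(abs(v) for v in vec)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in vec]
    return codes, scale

def dequantize_int8(codes, scale):
    """Approximate reconstruction of the original floats."""
    return [c * scale for c in codes]

random.seed(0)
embedding = [random.uniform(-1.0, 1.0) for _ in range(32)]  # stand-in for a PinSage vector
codes, scale = quantize_int8(embedding)
restored = dequantize_int8(codes, scale)
max_error = max(abs(a - b) for a, b in zip(embedding, restored))
```

Under this assumption, each token's embedding shrinks from 64 bytes (32 values at 2 bytes each) to 32 bytes plus one scale factor, at the cost of a reconstruction error of at most half a quantization step per dimension.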

Nearest Neighbor (NN) Selection:

At ranking time, most user sequences are still far longer than any single pin’s context requires. TransActV2 solves this by only selecting the following for each candidate pin c:

Mathematically:

Where NN(S, c) selects the actions in S most similar to c, using PinSage embeddings E_{PinSage}. The final user sequence fed to the model is:

⊕ denotes concatenation; S_{RT} is the real-time sequence and S_{imp} the impression sequence.
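The selection and concatenation steps can be sketched as follows. This is a minimal illustration, assuming dot-product similarity on the embeddings, a fixed budget `k`, and a final sequence built as NN(S_{LL}, c) ⊕ S_{RT} ⊕ S_{imp}; the exact components, their order, and the names `nn_select` and `build_user_sequence` are assumptions.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def nn_select(sequence, candidate_emb, k):
    """Return the k actions most similar to the candidate, kept in chronological order."""
    top = sorted(
        range(len(sequence)),
        key=lambda i: dot(sequence[i]["emb"], candidate_emb),
        reverse=True,
    )[:k]
    return [sequence[i] for i in sorted(top)]

def build_user_sequence(s_ll, s_rt, s_imp, candidate_emb, k):
    """Concatenate the per-candidate selection with the other sequences (assumed order)."""
    return nn_select(s_ll, candidate_emb, k) + s_rt + s_imp

# Toy data: 2-dim embeddings stand in for PinSage vectors.
s_ll = [
    {"id": "a", "emb": [1.0, 0.0]},
    {"id": "b", "emb": [0.0, 1.0]},
    {"id": "c", "emb": [0.9, 0.1]},
    {"id": "d", "emb": [-1.0, 0.0]},
]
s_rt = [{"id": "r", "emb": [0.0, 1.0]}]
s_imp = [{"id": "i", "emb": [0.0, -1.0]}]
candidate = [1.0, 0.0]

user_seq = build_user_sequence(s_ll, s_rt, s_imp, candidate, k=2)
selected_ids = [action["id"] for action in user_seq]
```

Because the selection depends on the candidate pin, each candidate sees a different, much shorter slice of the lifelong history.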

The model is a multi-headed, point-wise multi-task network over a wide & deep stack:

2. Transformer Encoder: Two self-attention layers with one attention head per layer, model dimension 64, and 32-dim feedforward sublayers. Causal masking is enforced so the model cannot “peek ahead.”

3. Downstream Heads: The output tensor is pooled (max pooling + linear layer). Feature crossing and MLP layers create the outputs for various actions (e.g., click, repin, hide).
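The causal masking mentioned above can be illustrated with a small single-head attention sketch. It is deliberately simplified: queries, keys, and values are the raw token vectors (learned projections, the feedforward sublayer, and the second layer are omitted), and the function name is hypothetical.

```python
import math

def causal_self_attention(x):
    """Single-head scaled dot-product attention with a causal mask.

    x is a list of T token vectors. Q = K = V = x here; a real encoder
    would apply learned projections first.
    """
    d = len(x[0])
    outputs, weights = [], []
    for t in range(len(x)):
        # Position t may only attend to positions 0..t (no peeking ahead).
        scores = [
            sum(q * k for q, k in zip(x[t], x[s])) / math.sqrt(d)
            for s in range(t + 1)
        ]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        row = [e / z for e in exps] + [0.0] * (len(x) - t - 1)
        weights.append(row)
        outputs.append(
            [sum(row[s] * x[s][j] for s in range(len(x))) for j in range(d)]
        )
    return outputs, weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, attn = causal_self_attention(tokens)
```

Every attention weight above the diagonal is zero, so the representation at step t depends only on actions up to and including t.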

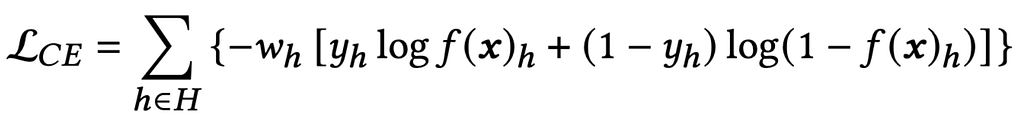

Traditional Click-Through-Rate (CTR) models use cross-entropy loss:

Where H is the set of action heads and f(x)_h is the predicted probability for head h.
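As a rough illustration, a multi-head cross-entropy objective can be computed as below. The sketch assumes an unweighted sum of binary cross-entropy terms over the heads in H; production models may weight heads differently, and the head names and function name are only examples.

```python
import math

def multi_head_bce(labels, probs, eps=1e-7):
    """Sum of binary cross-entropy over action heads.

    labels: dict head -> 0/1 label
    probs:  dict head -> predicted probability f(x)_h
    """
    total = 0.0
    for head, y in labels.items():
        p = min(max(probs[head], eps), 1.0 - eps)  # clamp for numerical safety
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total

labels = {"click": 1, "repin": 0, "hide": 0}
probs = {"click": 0.8, "repin": 0.1, "hide": 0.05}
loss = multi_head_bce(labels, probs)
```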

TransActV2 introduces the Next Action Loss (NAL) as an auxiliary task. This challenges the model to predict not just the probability of engagement but also, given today’s context and history, what the user will do next.

For the user representation at time step t [represented as u(t)], and the positive pin embedding at t+1 [represented as p_u(t+1)], the NAL is:

Where ⟨⋅,⋅⟩ is the inner product and n_u are negative (non-engaged) samples. The loss is combined with the main ranking loss:
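A minimal sketch of one way such a loss could be computed, assuming a sampled-softmax form over one positive and a set of negatives scored by inner product. The exact formulation, the averaging over time steps, the shared negative set, and the `nal_weight` hyperparameter are assumptions for illustration.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def next_action_loss(user_reps, positives, negatives):
    """Sampled-softmax style loss (assumed form).

    user_reps[t] plays the role of u(t), positives[t] the embedding of the
    pin engaged at t+1, and negatives is a list of negative embeddings.
    """
    total = 0.0
    for u, p in zip(user_reps, positives):
        pos = dot(u, p)
        logits = [pos] + [dot(u, n) for n in negatives]
        m = max(logits)
        log_z = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_z - pos  # equals -log softmax(positive)
    return total / len(user_reps)

def combined_loss(ranking_loss, nal, nal_weight=0.1):
    """Main ranking loss plus the weighted auxiliary term (weight is hypothetical)."""
    return ranking_loss + nal_weight * nal

user_reps = [[1.0, 0.0]]
positives = [[1.0, 0.0]]
negatives = [[0.0, 1.0]]
nal = next_action_loss(user_reps, positives, negatives)
total = combined_loss(1.0, nal, nal_weight=0.5)
```

With impression-based negatives, `negatives` would hold embeddings of pins that were shown to the user but not engaged with, rather than randomly drawn pins.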

Negative Sampling:

Impression-based negative samples (pins shown but not engaged with) are much more effective than random negative samples: they both improve repin accuracy and reduce inappropriate content recommendations. Offline, impression-based NAL improved top-3 hit rates by over 1% and reduced negative events by over 2%.

Handling lifelong sequences in production brings unique systems challenges:

Naive approach: O(NL) data movement for L=10,000+ sequence tokens and N candidate items per ranking request.

Solution:

Fused Transformer (SKUT):

Pinned Memory Arena:

Request-level de-duplication:
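As a rough illustration of the idea, the sketch below contrasts a naive batch, where every candidate row carries its own copy of the user sequence, with a de-duplicated batch that stores the sequence once per request and lets each row point to it. The payload layout and all function names are hypothetical and not taken from the production system.

```python
def naive_batch(user_sequence, candidates):
    """Every candidate row carries a full copy of the sequence: O(N * L) tokens."""
    return [{"candidate": c, "sequence": list(user_sequence)} for c in candidates]

def deduplicated_batch(user_sequence, candidates):
    """The sequence is stored once; rows reference it by index: O(N + L) tokens."""
    return {
        "sequences": [list(user_sequence)],
        "rows": [{"candidate": c, "sequence_index": 0} for c in candidates],
    }

def tokens_moved_naive(batch):
    return sum(len(row["sequence"]) for row in batch)

def tokens_moved_dedup(batch):
    return sum(len(seq) for seq in batch["sequences"])

user_sequence = list(range(1000))             # L = 1,000 tokens for one user
candidates = [f"pin_{i}" for i in range(50)]  # N = 50 candidates in the request

naive = naive_batch(user_sequence, candidates)
dedup = deduplicated_batch(user_sequence, candidates)
naive_tokens = tokens_moved_naive(naive)
dedup_tokens = tokens_moved_dedup(dedup)
```

In this toy setup the naive layout moves 50,000 sequence tokens while the de-duplicated layout moves 1,000, because all candidates in a request share the same user.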

Results:

Stacked together, these changes yielded 75–81% lower p99 model run latency at every batch size evaluated. End-to-end inference latency decreased by 103–338x (p50–p99) compared to baseline.

At Pinterest’s scale, with more than 570 million monthly active users (MAU)¹, even a fractional-percent improvement translates to millions more meaningful engagements. Prior to TransActV2, offline and online lifts for home feed ranking models were typically incremental; most established deep learning recommenders see monthly gains on the order of 0.1 to 0.3%. The step change delivered by TransActV2 was exceptional.

By leveraging lifelong sequences and Next Action Loss with impression-based negatives, TransActV2 achieves the largest jumps in offline metrics ever recorded in our production pipeline. Compared to prior systems (TransAct V1 and production BST), TransActV2 (RT + LL + NAL_{imp}) achieves:

In a real-world A/B test on 1.5% of home feed traffic (representing millions of users):

To put this in perspective: previous model launches over the past two years typically achieved lifts around 0.2–1%. This means TransActV2’s gain is up to an order of magnitude larger.

All key metrics in both offline and online evaluation were statistically significant (p < 0.01) and persisted across multiple test runs and surfaces.

TransActV2 sets a new benchmark for lifelong user sequence modeling in online industrial recommender systems:

This work represents a joint leap in deep learning, recommendation theory, and large-scale engineering. The future of home feed personalization is ever more dynamic, diverse, and rewarding for millions of Pinterest users.

¹ Pinterest analysis, global, Q1 2025

Next-Level Personalization: How 16k+ Lifelong User Actions Supercharge Pinterest’s Recommendations was originally published in Pinterest Engineering Blog on Medium, where people are continuing the conversation by highlighting and responding to this story.
