This article details how to build secure, privacy-preserving enterprise search and AI features. It offers a blueprint for integrating external data without compromising user data, leveraging RAG, federated search, and strict access controls. Essential for engineers building secure data platforms.

Many don’t know that “Slack” is in fact a backronym: it stands for “Searchable Log of all Communication and Knowledge”. And these days, it’s not just a searchable log: with Slack AI, Slack is now an intelligent log, leveraging the latest in generative AI to securely surface powerful, time-saving insights. We built Slack AI from the ground up to be secure and private, following principles that mirror our existing enterprise-grade compliance standards:

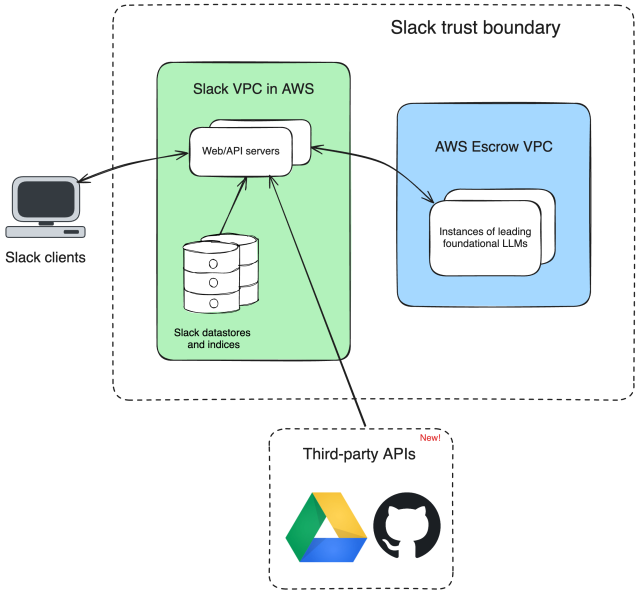

Now, with enterprise search, Slack is more than a log of all content and knowledge inside Slack—it also includes knowledge from your key applications. Users can now surface up-to-date, relevant content that is permissioned to them directly in Slack’s search. We’re starting with Google Drive and GitHub, and you’ll see many more of your connected apps as the year goes on. With these new apps, Slack search and AI Answers are all the more powerful, pulling in context from across key tools to satisfy your queries.

We built enterprise search to uphold the same enterprise-grade security and privacy standards as Slack AI.

This blog post will explain how these principles guided the architecture of enterprise search.

First, a refresher: how does Slack AI uphold our security principles?

Enterprise search is built atop Slack AI and benefits from many of the innovations we developed for Slack AI. We use the same LLMs in the same escrow VPC; we use RAG to avoid training LLMs on user data; and we don’t store Search Answers in the database (whether or not they contain external content). However, enterprise search adds a new twist. We can now provide permissioned content from external sources to the LLM and in your search results.
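To make the RAG idea concrete, here is a minimal sketch of how retrieved, permissioned snippets might be passed to a model at query time instead of being used for training. The function name `build_prompt`, the prompt wording, and the character budget are all assumptions for illustration, not Slack's actual implementation.

```python
def build_prompt(question: str, snippets: list[str], max_chars: int = 2000) -> str:
    """Assemble a one-off prompt from snippets retrieved for this query.

    Hypothetical sketch: the snippets exist only for the duration of the
    request, and nothing here writes to a database or updates model weights.
    """
    context_lines = []
    used = 0
    for number, snippet in enumerate(snippets, start=1):
        line = f"[{number}] {snippet}"
        # Stop adding context once the (assumed) character budget is reached.
        if used + len(line) > max_chars:
            break
        context_lines.append(line)
        used += len(line)
    context = "\n".join(context_lines)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )
```

The model only ever sees context that was fetched for the querying user, which is why retrieval-time permission checks matter so much in the rest of this design.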

When developing enterprise search, we decided not to store external source data in our database. Instead, we opted for a federated, real-time approach. Building atop Slack’s app platform, we use public search APIs from our partners to return the most up-to-date, permissioned results for a given user. Note that the Slack client may cache data between reloads to performantly serve product features like filtering and previews.
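As a rough illustration of the federated, real-time approach, the sketch below fans a query out to several sources concurrently and merges the results in memory without persisting them. Everything named here (`Result`, `federated_search`, the `fake_drive` and `fake_github` connectors, the timeout value) is hypothetical; real connectors would call each partner's public search API.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable


@dataclass(frozen=True)
class Result:
    source: str
    title: str
    score: float


# A connector takes (query, user_token) and returns results from one source.
Connector = Callable[[str, str], Awaitable[list[Result]]]


async def federated_search(
    query: str,
    tokens: dict[str, str],
    connectors: dict[str, Connector],
    timeout_s: float = 2.0,
) -> list[Result]:
    """Query every source the user holds a token for; store nothing."""

    async def call(source: str) -> list[Result]:
        try:
            return await asyncio.wait_for(
                connectors[source](query, tokens[source]), timeout_s
            )
        except Exception:
            # A slow or failing source should not break the whole search.
            return []

    # Sources without a token for this user are skipped entirely.
    sources = [s for s in connectors if s in tokens]
    batches = await asyncio.gather(*(call(s) for s in sources))
    merged = [result for batch in batches for result in batch]
    return sorted(merged, key=lambda r: r.score, reverse=True)


# Stand-in connectors so the sketch runs without network access.
async def fake_drive(query: str, token: str) -> list[Result]:
    return [Result("drive", f"Doc about {query}", 0.9)]


async def fake_github(query: str, token: str) -> list[Result]:
    return [Result("github", f"Issue mentioning {query}", 0.7)]
```

A call such as `asyncio.run(federated_search("roadmap", {"drive": "t1"}, {"drive": fake_drive, "github": fake_github}))` would return only the Drive result, since no GitHub token is present for that user.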

When searching for external data, it’s essential that we only fetch data which the user can access in the external system (this mirrors our Slack AI principle #3, “Slack AI only operates on the data that the user can already see”) and that this data is up to date.

Using a real-time approach instead of an index-based one helps us uphold this principle. Because we fetch data from external sources in response to each user query and store none of it in our database, there is no server-side copy that can go stale.

But how do we scope down queries to just data that the querying user can access in the external system? The Slack platform already provides powerful primitives for connecting external systems to Slack, chief among them being OAuth. The OAuth protocol allows a user to securely authorize Slack to take agreed-upon actions on their behalf, like reading files the user can access in the external system. By leveraging OAuth, we ensure that enterprise search can never perform an action the user did not authorize the system to perform in the external system, and that the actions we perform are a subset of those the user could themselves perform.
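The toy model below shows why searching with the querying user's own OAuth token keeps results within what that user can see: the external system resolves the token to a user and applies its own access rules. `ExternalSystem`, `Document`, and `search_as_user` are hypothetical stand-ins, not a real partner API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    readers: frozenset  # user ids allowed to read this document


class ExternalSystem:
    """Hypothetical stand-in for a partner's search API."""

    def __init__(self, documents: list, token_owner: dict):
        self._documents = documents
        self._token_owner = token_owner  # access token -> user id

    def search(self, query: str, access_token: str) -> list:
        user = self._token_owner.get(access_token)
        if user is None:
            raise PermissionError("invalid or revoked token")
        # The external system, not the caller, enforces who can read what.
        return [
            d.title
            for d in self._documents
            if query.lower() in d.title.lower() and user in d.readers
        ]


def search_as_user(system: ExternalSystem, user_tokens: dict, user_id: str, query: str) -> list:
    """Search on behalf of one user, using only that user's token."""
    token = user_tokens.get(user_id)
    if token is None:
        return []  # the user has not authorized this source
    return system.search(query, token)
```

Because the caller never holds a broader credential than the user's token, it cannot return a document the user could not open directly in the external system.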

We believe that your external data should be yours to control. As such, Slack admins must opt in each external source for use in their organization’s search results and Search Answers. They can also revoke this access (for both search results and Search Answers) at any time.

Next, Slack users also explicitly grant access before we integrate any external sources in their search. Users may also revoke access to any source at any time. This level of control is possible due to the OAuth-based approach mentioned above.
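The two levels of control described above can be pictured as a simple gate: a source is searchable for a user only if an admin has enabled it and that user has granted access. The `ConsentRegistry` class below is a hypothetical sketch of that rule; it assumes an admin revocation blocks the source without deleting individual user grants, which the original post does not specify.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRegistry:
    """Hypothetical record of admin opt-ins and per-user grants."""

    admin_enabled: set = field(default_factory=set)   # source names
    user_granted: dict = field(default_factory=dict)  # user id -> set of sources

    def admin_enable(self, source: str) -> None:
        self.admin_enabled.add(source)

    def admin_revoke(self, source: str) -> None:
        self.admin_enabled.discard(source)

    def user_grant(self, user: str, source: str) -> None:
        self.user_granted.setdefault(user, set()).add(source)

    def user_revoke(self, user: str, source: str) -> None:
        self.user_granted.get(user, set()).discard(source)

    def searchable_sources(self, user: str) -> set:
        # Both the admin opt-in and the user's own grant are required.
        return self.admin_enabled & self.user_granted.get(user, set())
```

Either party revoking access removes the source from `searchable_sources` immediately, since the check is evaluated at query time.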

An important security principle is that a system should never request more privileges than it requires. For enterprise search, this means that when we connect to an external system, we only request the OAuth scopes which are necessary to satisfy search queries—specifically read scopes.

Not only do we adhere to the principle of least privilege, we also show admins and end users the scopes we plan to request when they enable an external source for use in enterprise search. This means that admins and end users always know which authorizations Slack requires to integrate with an external source.
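One way to picture least privilege plus scope transparency is an allowlist of read-only scopes per source, checked before any authorization request is built, together with a human-readable summary shown at enablement time. The scope names (`files.read`, `repos.read`) and function names below are invented for illustration and are not the real scopes of any provider.

```python
# Hypothetical read-only scopes per source; real providers use their own names.
READ_ONLY_SCOPES = {
    "drive": frozenset({"files.read"}),
    "github": frozenset({"repos.read"}),
}


def scopes_to_request(source: str, wanted: set) -> list:
    """Return the scopes to request, refusing anything beyond read access."""
    allowed = READ_ONLY_SCOPES[source]
    extra = set(wanted) - allowed
    if extra:
        raise ValueError(f"scopes exceed least privilege: {sorted(extra)}")
    return sorted(wanted)


def consent_summary(source: str, scopes: list) -> str:
    """Text an admin or end user could be shown before enabling a source."""
    return f"Slack will request from {source}: {', '.join(scopes)}"
```

Rejecting over-broad requests in code, rather than by convention, means a later change cannot quietly widen what search is authorized to do.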

At Salesforce, trust is our #1 value. We’re proud to have built an enterprise search experience that puts security and privacy front and center, building atop the robust security principles already instilled by Slack AI. We’re excited to see how our customers use this powerful new functionality, secure in the knowledge that their external data is always in good hands.
