This article highlights how Azure Local provides engineers with flexible, sovereign, and resilient cloud capabilities on-premises or at the edge. It enables deploying AI and critical workloads while meeting strict compliance and operational autonomy requirements, even in disconnected environments.

Organizations running mission‑critical workloads operate under stricter standards because system failures can often affect people and business operations at scale. They must ensure control, resilience, and operational autonomy such that innovation does not compromise governance. They need agility that also maintains continuity and preserves standards compliance, so they can get the most out of AI, scalable compute, and advanced analytics on their terms.

For example, manufacturing plants need assembly lines to continue to operate during network outages, and healthcare providers need the ability to access patient data during natural disasters. Similarly, government agencies and critical infrastructure operators must comply with regulations to keep systems autonomous and data within national borders. Additionally, regulations sometimes mandate that sensitive data remains stored and processed locally under local jurisdiction and personnel control.

These are exactly the challenges Azure’s adaptive cloud approach is designed to solve. We are extending Azure public regions with options that adapt to our customers’ evolving business requirements without forcing trade-offs. Microsoft’s strategy spans our public cloud, private cloud, and edge technology, giving customers a unified platform for operations, applications, and data with the right balance of flexibility and control. This approach empowers customers to use Azure services to innovate in environments under their full control, rather than maintaining separate, siloed, or legacy IT systems.

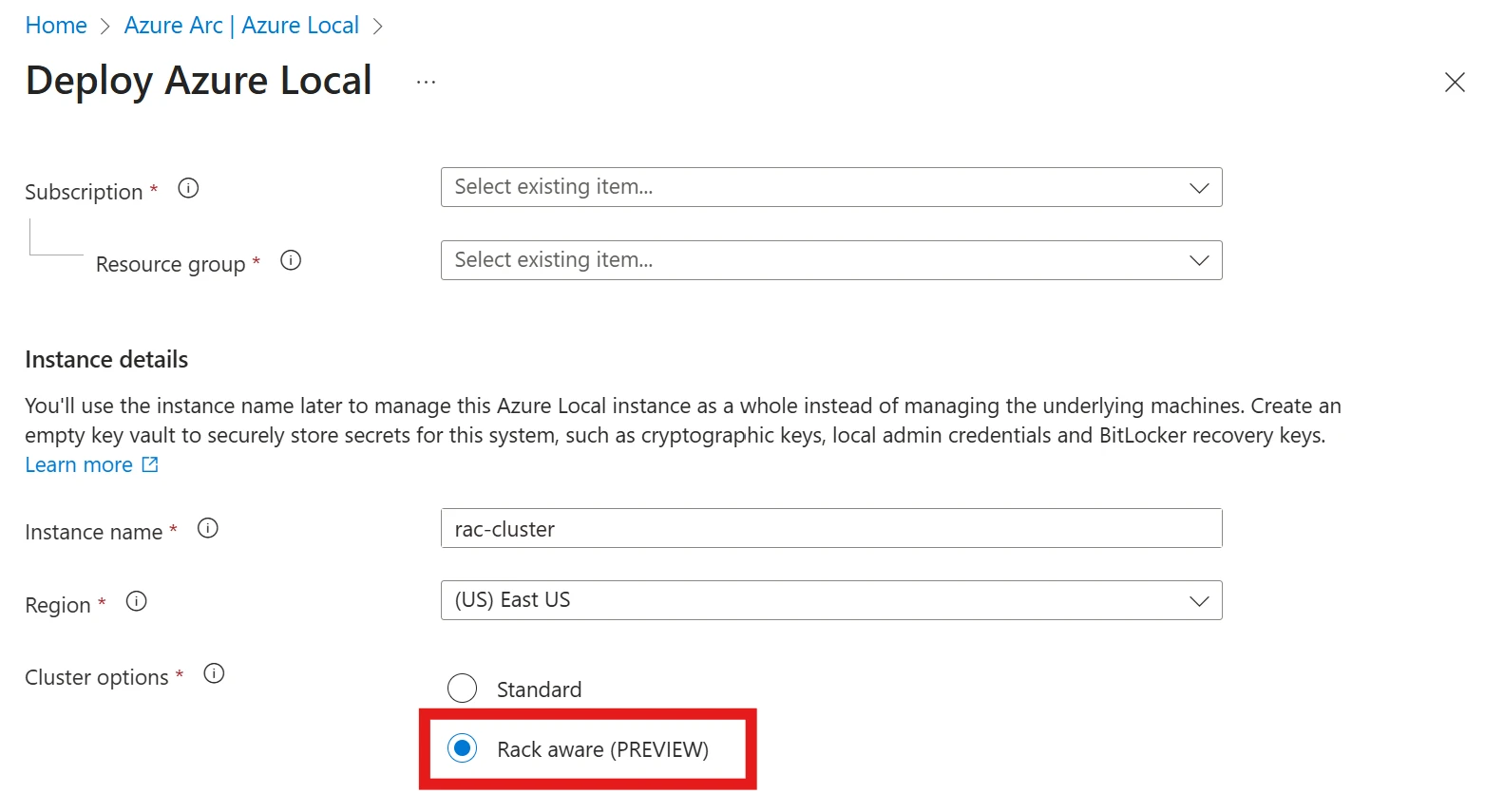

To address unique operational and data sovereignty needs, Microsoft introduced Azure Local—Azure infrastructure delivered in customers’ own datacenters or distributed locations. Azure Local comes with integrated compute, storage, and networking services and leverages Azure Arc to extend cloud services across the management, data, application, and security layers into hybrid and disconnected environments.

Over the past six months, our team has significantly expanded Azure Local’s capabilities to meet requirements across industries. We are seeing tremendous momentum from customers like GSK, a global biopharma leader extending cloud innovation and AI to the edge using Azure Local. GSK is enabling real-time data processing and AI inferencing across vaccine and medicine manufacturing and R&D labs worldwide. GSK joined our What’s new in Azure Local session at Ignite last month, offering insight into how they are using Azure Local.

We are also engaging deeply with public sector organizations to ensure essential services can run independent of internet connectivity when needed, from city administrations to defense and emergency response agencies.

To support these customers, we are enabling a growing set of Azure Local features and functionalities across Microsoft and partners, many of which are now in General Availability (GA) or preview.

In short, Azure Local has rapidly evolved into a robust platform for operational sovereignty. It delivers Azure consistency for all workloads from core business apps to AI, in customers’ locations—from a few nodes on a factory floor up to thousands of nodes. These advancements reflect our commitment to meet customers where they are.

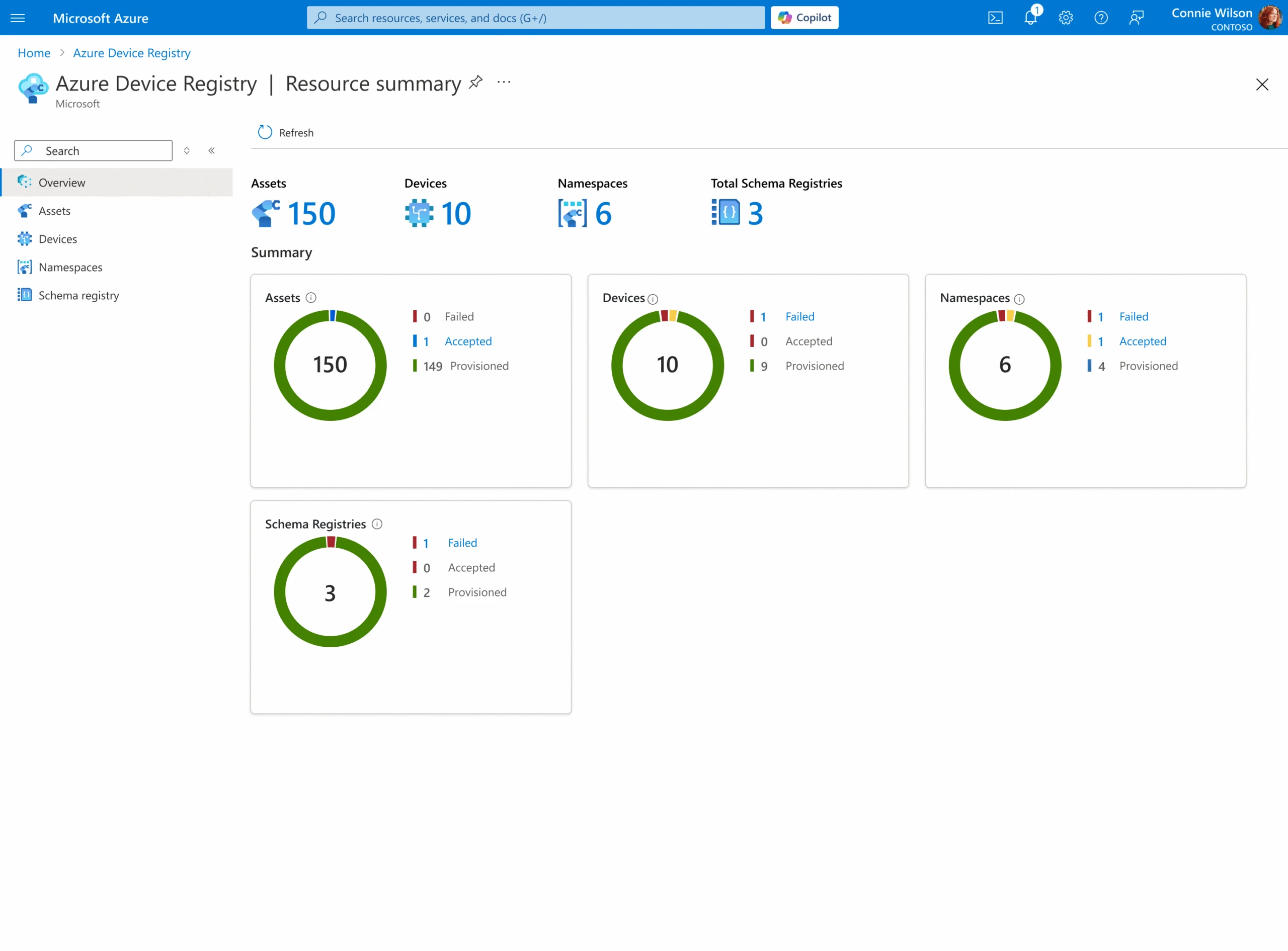

Azure’s adaptive cloud approach helps bring AI to physical operations. Our Azure IoT platform enables asset-intensive organizations to harness data from devices and sensors in a secure, scalable, and resilient fashion. When combined with Microsoft Fabric, customers get real-time insights from their operational data. This integration allows industries such as manufacturing, energy, and industrial operations to bridge digital and physical systems and adopt AI and automation in ways that align with their specific needs.

Our approach to enabling AI in physical operations environments follows two basic patterns. Azure IoT Operations enables device and sensor data from larger sites to be aggregated and processed close to its source for near real-time decision-making and reduced latency, streaming only relevant data to Fabric for more advanced analytics. Azure IoT Hub, on the other hand, enables device data to flow securely and directly to Fabric with cloud-based identity and security. The integration across Microsoft Fabric and Azure IoT helps bridge Operational Technology (OT) and Information Technology (IT), delivering cost-effective, secure, and repeatable outcomes.
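As a rough illustration of the first pattern (aggregate near the source, forward only what matters), here is a minimal Python sketch. It does not use any Azure IoT Operations or Fabric API; the `Reading` type, the `summarize_at_edge` function, the sensor names, and the threshold are all hypothetical and exist only to show the shape of the idea.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable


@dataclass
class Reading:
    """Hypothetical sensor reading; not an Azure IoT type."""
    sensor_id: str
    value: float


def summarize_at_edge(readings: Iterable[Reading], threshold: float) -> dict:
    """Aggregate one window of readings locally.

    Returns a compact summary plus only the readings above the
    threshold, standing in for the "relevant data" sent upstream.
    """
    window = list(readings)
    values = [r.value for r in window]
    return {
        "count": len(values),
        "mean": mean(values) if values else None,
        "alerts": [r for r in window if r.value > threshold],
    }


# Illustrative data only: three readings, one above the threshold.
window = [
    Reading("pump-1", 70.2),
    Reading("pump-1", 71.0),
    Reading("pump-2", 98.6),
]
summary = summarize_at_edge(window, threshold=90.0)
```

In this sketch the full window stays at the site, and only the summary and the single out-of-range reading would be forwarded for further analytics. In the second pattern, by contrast, each reading would be sent on directly without this local step.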

In the last six months, we introduced several enhancements to Azure IoT tailored for connected operations use cases:

Customers like Chevron and Husqvarna are scaling Azure IoT Operations from single-site pilots to multi-site rollouts, unlocking new use cases such as predictive maintenance and worker safety. These deployments demonstrate measurable impact and show adaptive cloud architectures delivering business value. Our partner ecosystem is also growing, with Siemens, Litmus, Rockwell Automation, and Sight Machine building on the platform.

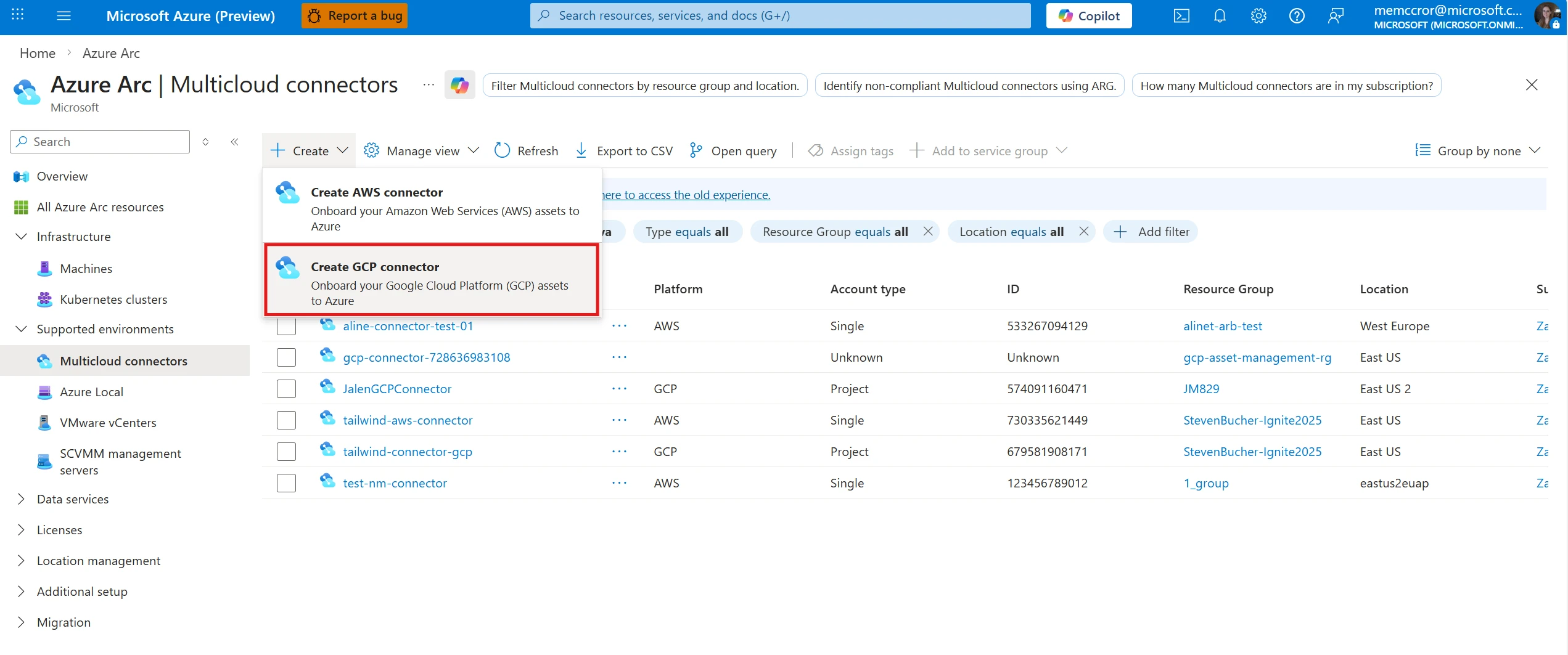

Organizations often grapple with the complexity of highly distributed IT estates—spanning on-premises datacenters, hundreds or sometimes thousands of edge sites, multiple public clouds, and countless devices. Managing and securing this sprawling ecosystem is challenging with traditional tools. A core promise of Azure’s adaptive cloud approach is helping to simplify centralized operations through a single, unified control plane via Azure Arc.

Over the last six months, we have delivered a wave of improvements to help customers manage distributed resources at scale, across heterogeneous environments, in a frictionless way. Key enhancements in our Azure Arc platform include:

These enhancements underscore our belief that cloud management and cloud-native application development should not stop at the cloud. Whether an IT team is responsible for five datacenters or 5000 retail sites, Azure provides the tooling to manage that distributed environment and develop applications as one cohesive and adaptive cloud.

Azure’s adaptive cloud approach gives organizations the freedom to innovate on their terms while maintaining control. In an era defined by uncertainty, whether from cyber threats or geopolitical shifts, Azure empowers customers to modernize confidently without sacrificing resiliency or control.

The post New options for AI-powered innovation, resiliency, and control with Microsoft Azure appeared first on Microsoft Azure Blog.
