Consolidating fragmented ML models reduces technical debt and operational overhead while boosting performance through shared representations. This case study provides a blueprint for balancing architectural unification with the need for surface-specific specialization in large-scale systems.

Authors: Duna Zhan | Machine Learning Engineer II; Qifei Shen | Senior Staff Machine Learning Engineer; Matt Meng | Staff Machine Learning Engineer; Jiacheng Li | Machine Learning Engineer II; Hongda Shen | Staff Machine Learning Engineer

Pinterest ads show up across multiple product surfaces, such as the Home Feed, Search, and Related Pins. Each surface has different user intent and different feature availability, but they all rely on the same core capability: predicting how likely a user is to engage with an ad.

Before this project, the ads engagement stack relied on three independent production models, one per surface. Although the models were initially derived from a similar design, they diverged over time in several core components, including user sequence modeling, feature crossing modules, feature representations, and training configurations. This fragmentation led to persistent operational and modeling inefficiencies:

These challenges motivated the development of a unified engagement framework to gradually consolidate surface-specific models while retaining the flexibility needed for each surface.

In this post, we present our approach to unifying two previously separate engagement models into a single architecture with surface-specific calibration and lightweight surface-specialized components. We also describe several efficiency optimizations such as projection layers and request-level broadcasting, which reduce infrastructure costs. Overall, the unified model not only resolves the iteration, cost, and maintenance issues described above, but also strengthens representation learning by combining complementary features and modeling choices across surfaces, leading to significant online metric improvements.

We treated model unification as a major architectural change and followed three principles to avoid common failure modes:

We also set explicit milestones based on serving constraints. Because the serving costs of Related Pins (RP), Home Feed (HF), and Search (SR) differ substantially, we set out to unify Home Feed and Search first, since they have similar CUDA throughput characteristics, and to expand to Related Pins only once the throughput and efficiency work had stabilized.

As a first step, we built a baseline unified model by:

This baseline delivered promising offline improvements, but it also materially increased training and serving cost. As a result, additional iterations were required before the model was production-ready.

Because RP had a substantially higher cost profile, we focused next on unifying HF and SR. We incorporated key architectural elements from each surface such as MMoE [1] and long user sequences [2]. When applied in isolation (e.g., MMoE on HF alone, or long sequence Transformers on SR alone), these changes did not produce consistent gains, or the gain and cost trade-off was not favorable. However, when we integrated these components into a single unified model and expanded training to leverage combined HF+SR features and multi-surface training data, we observed stronger improvements with a more reasonable cost profile.

The diagram below shows the final target architecture: a single unified model that serves three surfaces, while still supporting the development of surface-specific modules (for example, surface-specific tower trees and late fusion with surface-specific modules within those tower trees). During serving, each surface-specific tower tree and its associated modules will handle only that surface’s traffic, avoiding unnecessary compute cost from modules that don’t benefit other surfaces. As a first step, the unified model currently includes only the HF and SR tower trees.
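The routing idea above (a shared trunk, with each surface-specific tower seeing only its own traffic) can be sketched as follows. This is a minimal illustration, not the production design: the single-matrix trunk, the linear towers, and the names `shared_trunk`, `predict`, `W_shared`, and `towers` are all assumptions made for the example.

```python
import numpy as np

def shared_trunk(features, W_shared):
    """Shared representation computed once for every request (hypothetical one-layer trunk)."""
    return np.maximum(features @ W_shared, 0.0)  # ReLU

def predict(features, surfaces, W_shared, towers):
    """Route each row to the tower of its surface; towers never see other surfaces' rows."""
    surfaces = np.asarray(surfaces)
    unknown = set(surfaces.tolist()) - set(towers)
    if unknown:
        raise KeyError(f"no tower registered for surfaces: {sorted(unknown)}")

    hidden = shared_trunk(features, W_shared)
    logits = np.empty(len(surfaces), dtype=np.float64)
    for name, w_tower in towers.items():
        mask = surfaces == name
        if mask.any():  # skip towers with no traffic in this batch
            logits[mask] = hidden[mask] @ w_tower
    return logits
```

Because each tower runs only on the masked rows, adding a module to one surface's tower does not add compute for the other surface's traffic.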

Since the unified model serves both HF and SR traffic, calibration is critical for CTR prediction. We found that a single global calibration layer could be suboptimal because it implicitly mixes traffic distributions across surfaces.

To address this, we introduced a view-type-specific calibration layer, which calibrates HF and SR traffic separately. Online experiments showed that this approach improved performance compared to the original shared calibration.
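As a rough illustration of calibrating each view type separately, the sketch below applies a per-surface scale and bias to the raw logit before the sigmoid (Platt-style scaling). The post does not say which calibration form is used, so the functional form, the parameter values, and the names `CALIBRATION_PARAMS` and `calibrate` are assumptions.

```python
import numpy as np

# Hypothetical (scale, bias) pairs, one per view type. In practice these
# would be fitted on each surface's own traffic.
CALIBRATION_PARAMS = {
    "HF": (1.0, -0.2),
    "SR": (0.9, 0.1),
}

def calibrate(raw_logits, view_types, params=CALIBRATION_PARAMS):
    """Return calibrated probabilities, using the parameters of each row's view type."""
    raw_logits = np.asarray(raw_logits, dtype=np.float64)
    scales = np.array([params[v][0] for v in view_types])
    biases = np.array([params[v][1] for v in view_types])
    return 1.0 / (1.0 + np.exp(-(scales * raw_logits + biases)))

# The same raw logit maps to a different probability on each surface.
probs = calibrate([0.0, 0.0], ["HF", "SR"])
```

A single global layer would force one `(scale, bias)` pair onto both traffic distributions, which is the mixing effect described above.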

Using a single shared architecture for HF and SR CTR prediction limited flexibility and made it harder to iterate on surface-specific features and modules. To restore extensibility, we introduced a multi-task learning design within the unified model and exported a separate checkpoint for each surface, so each surface could adopt the most appropriate architecture while still benefiting from shared representation learning.

This enabled more flexible, surface-specific CTR prediction and established a foundation for continued surface-specific iteration.
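One simple way to picture a surface-specific export is as a filter over the trained parameters: keep everything shared, plus the modules belonging to one surface. The sketch below assumes a flat name-to-value parameter mapping and hypothetical `shared.` and `tower.<surface>.` naming prefixes; the actual export mechanism is not described in this post.

```python
def export_surface_checkpoint(state, surface,
                              shared_prefix="shared.", tower_prefix="tower."):
    """Keep shared parameters plus the tower parameters of a single surface.

    `state` maps parameter names to values. The prefixes are illustrative
    naming conventions, not real identifiers from the production model.
    """
    wanted = f"{tower_prefix}{surface}."
    return {
        name: value
        for name, value in state.items()
        if name.startswith(shared_prefix) or name.startswith(wanted)
    }

# Toy parameter mapping with one shared module and two surface towers.
full_state = {"shared.embed": 1, "tower.HF.w": 2, "tower.SR.w": 3}
hf_checkpoint = export_surface_checkpoint(full_state, "HF")
```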

Infrastructure cost is mainly driven by traffic and per-request compute, so unifying models does not automatically reduce infra spend. In our case, early unified versions actually increased latency because merging feature maps and modules made the model larger. To address this, we paired unification with targeted efficiency work.

We simplified the expensive compute paths by using DCNv2 to project the Transformer outputs into a smaller representation before the downstream crossing and tower tree layers, which reduced serving latency while preserving signal. We also enabled fused kernel embedding to reduce inference latency and TF32 to speed up training.
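To make the projection step concrete, here is a minimal sketch that flattens a Transformer output, applies one low-rank DCNv2 cross layer, and then projects to a smaller width. The cross-layer formula follows the DCNv2 paper; how the production model wires the cross layer and the projection together is not specified in this post, so the composition below, all shapes, and the names `cross_layer` and `project_transformer_output` are assumptions.

```python
import numpy as np

def cross_layer(x0, xl, U, V, b):
    """Low-rank DCNv2 cross layer: x0 * (U (V^T xl) + b) + xl, with an elementwise product.

    x0, xl: (batch, D); U, V: (D, rank); b: (D,).
    """
    return x0 * ((xl @ V) @ U.T + b) + xl

def project_transformer_output(seq_out, U, V, b, P):
    """Shrink (batch, seq_len, d_model) to (batch, out_dim) before downstream layers."""
    batch = seq_out.shape[0]
    x0 = seq_out.reshape(batch, -1)         # flatten to (batch, seq_len * d_model)
    crossed = cross_layer(x0, x0, U, V, b)  # a single cross layer on the flattened output
    return crossed @ P                      # linear projection, P: (D, out_dim)

# Toy shapes: 4 sequence positions of width 8 are reduced to 6 dimensions.
rng = np.random.default_rng(0)
seq_out = rng.normal(size=(2, 4, 8))
D, rank, out_dim = 4 * 8, 4, 6
U, V = rng.normal(size=(D, rank)), rng.normal(size=(D, rank))
b, P = np.zeros(D), rng.normal(size=(D, out_dim))
small = project_transformer_output(seq_out, U, V, b, P)
```

Every layer after the projection then operates on `out_dim` features instead of `seq_len * d_model`, which is where the latency saving would come from in this sketch.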

On the serving side, we reduced redundant embedding table lookups with request-level broadcasting. Instead of repeating heavy user embedding lookups for every candidate/request in a batch, we fetch embeddings once per unique user and then broadcast them back to the original request layout, keeping model inputs and outputs unchanged. The main trade-off is an upper bound on the number of unique users per batch; if that bound is exceeded, the request can fail, so we set it to a unique-user count validated in testing to keep the system reliable.
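The dedupe-then-broadcast pattern can be sketched with `np.unique` and its inverse index. This is an illustration under stated assumptions: the in-memory `embedding_table`, the cap `MAX_UNIQUE_USERS`, and the function name are hypothetical, and a real serving stack would perform the lookup against its own embedding store.

```python
import numpy as np

MAX_UNIQUE_USERS = 4  # hypothetical cap on unique users per batch

def lookup_user_embeddings(user_ids, embedding_table, max_unique=MAX_UNIQUE_USERS):
    """Fetch each unique user's embedding once, then broadcast to the original row layout."""
    user_ids = np.asarray(user_ids)
    unique_ids, inverse = np.unique(user_ids, return_inverse=True)
    if unique_ids.size > max_unique:
        # Mirrors the trade-off in the text: exceeding the bound fails the request.
        raise ValueError(f"{unique_ids.size} unique users exceeds cap of {max_unique}")

    unique_embeddings = embedding_table[unique_ids]  # one lookup per unique user
    return unique_embeddings[inverse.reshape(-1)]    # same shape as a per-row lookup

# Six candidate rows but only three distinct users: three lookups instead of six.
table = np.arange(30, dtype=np.float64).reshape(10, 3)
batch_embeddings = lookup_user_embeddings([7, 7, 7, 2, 2, 9], table)
```

The output has one row per candidate, in the original order, so downstream layers see the same input as they would with a per-row lookup.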

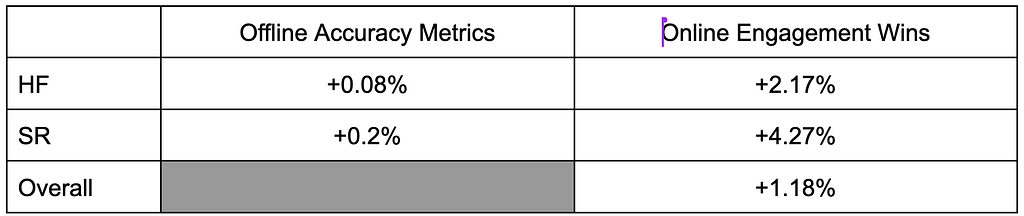

In offline experiments, we observed improvements across HF and SR, and online experiments validated those gains. As shown in the table below, the improvements were significant on both online and offline metrics [3].

Unifying ads engagement modeling isn’t simply a matter of replacing three separate models with one. The real objective is to build a single, cohesive framework that can share learning wherever it reliably generalizes across surfaces, while still making room for surface-specific features and behavioral nuances when they genuinely matter. At the same time, the framework has to remain efficient enough to serve at scale. Ultimately, by consolidating the core approach, we eliminate duplicated work and put ourselves in a position to ship improvements faster and more consistently.

In the next milestone, we plan to bring the RP surface into the unified engagement model, creating a more consistent experience and further consolidating our models. The primary challenge will be model efficiency, so we will integrate additional efficiency improvements to meet our performance targets.

This work is the result of collaboration among ads ranking team members and across multiple teams at Pinterest.

Engineering Teams:

[1] Li, Jiacheng, et al. “Multi-gate-Mixture-of-Experts (MMoE) model architecture and knowledge distillation in Ads Engagement modeling development”. Pinterest Engineering Blog.

[2] Lei, Yulin, et al. “User Action Sequence Modeling for Pinterest Ads Engagement Modeling”. Pinterest Engineering Blog.

[3] Pinterest Internal Data, US, 2025.

Unifying Ads Engagement Modeling Across Pinterest Surfaces was originally published in Pinterest Engineering Blog on Medium, where people are continuing the conversation by highlighting and responding to this story.
