This article details how Pinterest uses advanced ML and LLMs to understand complex user intent, moving beyond simple recommendations to goal-oriented assistance. It offers a practical blueprint for building robust, extensible recommendation systems from limited initial data.

Lin Zhu | Sr. Staff Machine Learning Engineer

Jaewon Yang | Principal Machine Learning Engineer

Ravi Kiran Holur Vijay | Director, Machine Learning Engineering

Pinterest has always been a go-to destination for inspiration, a place where users explore everything from daily meal ideas to major life events like planning a wedding or renovating a home. Our core mission is to be an inspiration-to-realization platform. To fulfill this, we recognized a critical challenge: we needed to move beyond understanding immediate interests and comprehend the underlying, long-term goals of our users. Therefore, we introduce user journeys as the foundation for recommendations.

We define a journey as the intersection of a user’s interests, intent, and context at a specific point in time. A user journey is a sequence of user-item interactions, often spanning multiple sessions, that centers on a particular interest and reveals a clear intent, such as exploring trends or making a purchase. For example, a journey might involve an interest in “summer dresses,” an intent to “learn what’s in style,” and a context of being “ready to buy.” Users can have multiple, sometimes overlapping, journeys occurring simultaneously as their interests and goals evolve.

Inferring user journeys goes beyond understanding immediate interests: it allows us to comprehend the underlying, long-term goals of our users. By identifying user journeys, we can move from simple content recommendations to becoming a platform that assists users in achieving their goals, whether that is planning a wedding, renovating a kitchen, or learning a new skill. This aligns with Pinterest’s mission to be an inspiration-to-realization platform and provides the foundation for journey-aware recommendations.

From the outset, we knew we were building a new product without large amounts of training data. This constraint shaped our engineering philosophy for this project:

To identify these journeys, we evaluated two primary approaches:

We chose the Dynamic Extraction approach to generate journeys based on the user’s information. It offered greater flexibility, personalization, and adaptability, allowing the system to respond to emerging trends and unique user behaviors. This method also allowed us to leverage existing infrastructure and simplify the modeling process by focusing on clustering activities for individual users.

At a high level, we extract keywords from multiple sources and apply hierarchical clustering to group them into keyword clusters; each cluster is a journey candidate. We then build specialized models for journey ranking, stage prediction, naming, and expansion. The inference pipeline runs on a streaming system, which lets us run full inference whenever the algorithm changes and daily incremental inference for recently active users, so that journeys respond quickly to a user’s most recent activity.
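The clustering step can be illustrated with a minimal sketch. It assumes every keyword already has an embedding vector and uses average-linkage agglomerative clustering with a cosine-distance cutoff. The toy embeddings, the `max_distance` threshold, and all function names are hypothetical; the article does not say which linkage or distance the production system uses.

```python
import math
from itertools import combinations


def cosine_distance(a, b):
    """Return 1 - cosine similarity for two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def average_linkage(cluster_a, cluster_b, embeddings):
    """Mean pairwise distance between the members of two clusters."""
    total = sum(
        cosine_distance(embeddings[i], embeddings[j])
        for i in cluster_a
        for j in cluster_b
    )
    return total / (len(cluster_a) * len(cluster_b))


def cluster_keywords(keywords, embeddings, max_distance=0.3):
    """Merge the closest pair of clusters until no pair is within max_distance."""
    clusters = [[i] for i in range(len(keywords))]
    while len(clusters) > 1:
        # Find the closest pair of clusters under average linkage.
        (a, b), dist = min(
            (
                ((a, b), average_linkage(clusters[a], clusters[b], embeddings))
                for a, b in combinations(range(len(clusters)), 2)
            ),
            key=lambda item: item[1],
        )
        if dist > max_distance:
            break
        # a < b always holds here, so deleting b leaves index a valid.
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return [[keywords[i] for i in cluster] for cluster in clusters]


# Hypothetical keywords and 3-dimensional embeddings, for illustration only.
keywords = ["summer dresses", "maxi dress", "kitchen remodel", "cabinet colors"]
embeddings = [
    [0.9, 0.1, 0.0],
    [0.8, 0.2, 0.1],
    [0.1, 0.9, 0.2],
    [0.0, 0.8, 0.3],
]
journey_candidates = cluster_keywords(keywords, embeddings)
```

Each returned cluster plays the role of one journey candidate. A distance cutoff, rather than a fixed number of clusters, is used here because the number of journeys per user is not known in advance.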

Let’s break down the key components of this innovative system:

This foundational component is designed to generate fresh, personalized journeys for each user.

Clear and intuitive journey names are crucial for user experience.

To ensure the most relevant journeys are presented, and to prevent monotony, we built a ranking model and applied diversification afterwards.

As in traditional ranking problems, our initial approach is a point-wise ranking model. Labels come from user email feedback and human annotation. The model takes user features, engagement features (how frequently the user engaged with this journey through searches, actions on Pins, etc.), and recency features. This provides a simple, immediate baseline.
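To make "point-wise" concrete, here is a minimal sketch in which each (user, journey) pair is scored independently and candidates are then sorted by score. It assumes a logistic regression over two hypothetical features (engagement frequency and recency, both scaled to [0, 1]) with made-up labels. The article does not describe the actual model family, features, or training setup at this level of detail.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_pointwise(features, labels, lr=0.5, epochs=500):
    """Fit logistic regression with full-batch gradient descent on log loss."""
    n, dim = len(features), len(features[0])
    weights, bias = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(features, labels):
            error = sigmoid(sum(w * v for w, v in zip(weights, x)) + bias) - y
            for k in range(dim):
                grad_w[k] += error * x[k] / n
            grad_b += error / n
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias


def score(weights, bias, x):
    """Score one (user, journey) pair independently of all other candidates."""
    return sigmoid(sum(w * v for w, v in zip(weights, x)) + bias)


# Hypothetical training rows: [engagement_frequency, recency]; label 1 = relevant.
train_x = [[0.9, 0.8], [0.7, 0.9], [0.2, 0.1], [0.1, 0.3]]
train_y = [1, 1, 0, 0]
weights, bias = train_pointwise(train_x, train_y)

# Hypothetical journey candidates for one user.
candidates = {"wedding planning": [0.8, 0.9], "garden ideas": [0.2, 0.2]}
ranked = sorted(
    candidates,
    key=lambda name: score(weights, bias, candidates[name]),
    reverse=True,
)
```

Because every candidate is scored in isolation, nothing in this stage discourages near-duplicate journeys from occupying the top positions, which is what the diversification step addresses.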

To prevent the top-ranked journeys from always being similar, we apply a diversifier after the journey ranking stage. The most straightforward approach is to penalize a journey if it is similar to journeys ranked above it (similarity is measured using pretrained keyword embeddings). For each journey i, the score is updated based on the formula below. Finally, we re-rank the journeys by the updated score.

Occurrence is the number of similar journeys ranked before the current journey, and penalty is a hyperparameter to tune, usually chosen as 0.95.
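The formula itself is not reproduced in this text. One reading consistent with the definitions above is a multiplicative decay, `updated_score_i = score_i * penalty ** occurrence_i`; this is an assumption, not the confirmed formula. The sketch below implements that reading, with a hypothetical cosine-similarity threshold deciding when two journeys count as "similar" and toy 2-dimensional embeddings standing in for pretrained keyword embeddings.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def diversify(journeys, penalty=0.95, similarity_threshold=0.8):
    """Re-rank (name, score, embedding) tuples; return (name, updated_score) pairs.

    Assumed update rule: updated = score * penalty ** occurrence, where
    occurrence counts similar journeys ranked above the current one.
    """
    ranked = sorted(journeys, key=lambda j: j[1], reverse=True)
    updated = []
    for position, (name, score, embedding) in enumerate(ranked):
        occurrence = sum(
            1
            for _, _, earlier in ranked[:position]
            if cosine_similarity(embedding, earlier) >= similarity_threshold
        )
        updated.append((name, score * penalty ** occurrence))
    return sorted(updated, key=lambda j: j[1], reverse=True)


# Hypothetical ranked journeys with toy embeddings.
journeys = [
    ("boho wedding dresses", 0.90, [1.0, 0.0]),
    ("lace wedding dresses", 0.88, [0.9, 0.1]),
    ("kitchen remodel", 0.85, [0.0, 1.0]),
]
reranked = diversify(journeys)
```

In this toy input the second wedding-dress journey has one similar journey above it, so its score is multiplied by 0.95 once and it drops below the unrelated kitchen journey.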

Understanding a journey’s lifecycle helps us determine appropriate notification timing. We simplify this into two objectives:

User journeys can be used in downstream applications for retrieval and ranking. The desired output is a list of distinct user journeys. Each journey should ideally be represented with:

Confidence Score: The confidence score for this predicted journey.

We aim to establish a robust evaluation and monitoring pipeline to ensure consistent and reliable quality assessment of top-k user journey predictions. Because human evaluation is costly and sometimes inconsistent, we leverage LLMs to assess the relevance of predicted user journeys. By providing user features and engagement history, we ask the LLM to generate a 5-level score with explanations. We have validated that LLM judgments closely correlate with human assessments in our use case, giving us confidence in this approach.
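One way to check agreement between LLM and human judgments on a 5-level scale is a rank correlation. The sketch below computes Spearman correlation with tie-aware ranks in plain Python. The choice of Spearman and the example scores are assumptions made for illustration; the article does not state which agreement metric was used or report the underlying data.

```python
import math


def average_ranks(values):
    """Assign 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def spearman(xs, ys):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mean_x, mean_y = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical 1-5 relevance scores for the same six predicted journeys.
llm_scores = [5, 4, 2, 1, 3, 4]
human_scores = [5, 5, 2, 1, 3, 3]
agreement = spearman(llm_scores, human_scores)
```

A rank-based metric suits ordinal scores because it only asks whether the two raters order journeys the same way, not whether they use the scale identically.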

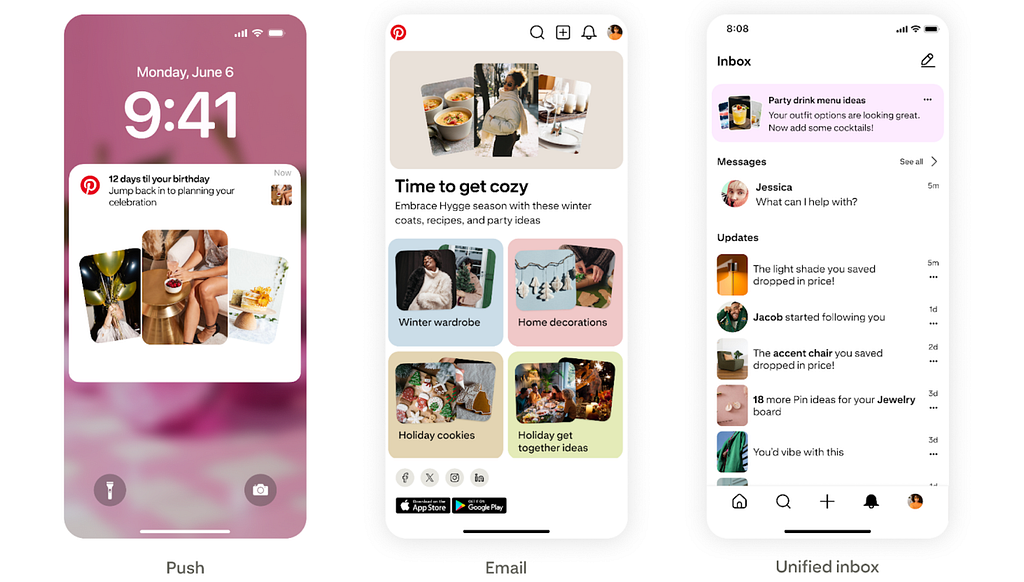

We applied user journey inference to deliver notifications related to a user’s ongoing journeys. Our initial experiments demonstrate the significant impact of Journey-Aware Notifications¹:

As a follow-up, we are working on using large language models (LLMs) to infer user journeys from user information and activities, an approach that offers several key benefits:

We tuned our prompts and used GPT to generate ground truth labels for fine-tuning Qwen, enabling us to scale in-house LLM inference while maintaining competitive relevance. Next, we used Ray batch inference to improve efficiency and scalability. Finally, we are implementing full inference for all users and incremental inference for recently active users to reduce overall inference costs. All generated journeys go through safety checks to ensure they meet our safety standards.

We’d like to thank Kevin Che, Justin Tran, Rui Liu, Anya Trivedi, Binghui Gong, Randy Tumalle, Tianqi Wang, Fangzheng Tian, Eric Tam, Manan Kalra, Mengtian Hu and Mengying Yang for their contributions!

Thanks to Jeanette Mukai, Darien Boyd, Samuel Owens, Justin Pangilinan, Blake Weber, Gloria Lee and Jess Adamiak for the product insights!

Thanks to Tingting Zhu, Shivani Rao, Dimitra Tsiaousi, Ye Tian, Vishwakarma Singh, Shipeng Yu, Rajat Raina and Randall Keller for the support!

¹Pinterest Internal Data, USA, April-May 2025

Identify User Journeys at Pinterest was originally published in Pinterest Engineering Blog on Medium, where people are continuing the conversation by highlighting and responding to this story.
