This approach demonstrates how to scale LLM-driven automation by replacing black-box fine-tuning with deterministic DSLs. It ensures reliability and debuggability for mission-critical workflows while significantly reducing the operational overhead of model maintenance.

By Shipra Shreyasi, Aniket Kumar, Manas Agarwal, and Pragya Kumari

In our Engineering Energizers Q&A series, we highlight the engineering minds driving innovation across Salesforce. Today we spotlight Shipra Shreyasi, a software engineering architect who leads the team enhancing natural-language-to-Flow creation within Agentforce. This work lets users build production-ready Flow metadata from plain-language prompts while supporting automation at a scale surpassing 461 billion monthly executions.

Explore how Shipra’s team boosted natural-language-to-Flow precision by swapping fine-tuned models for a restricted, multi-level DSL framework, and how they maintained reliability across 63+ Flow varieties — including Screen Flows, UI elements, and unique actions — through specialized constraints and staged verification.

The team simplifies how you create, modify, and understand automation by using large language models to transform plain-language instructions into Flow metadata. This process allows you to deploy business logic directly into Flow Builder with the expectation that every automated task behaves exactly as intended.

Accuracy remains the central focus because Flows serve as vital operational assets. Since a Flow that fails to reflect your intent can introduce hidden errors, the team prioritizes several core requirements:

This perspective shifts Flow generation from a simple text task into a structured engineering solution. By applying explicit constraints and system-aware reasoning, the team helps you build sophisticated automations with minimal manual effort and high confidence in the final result.


Fine-tuned models created accuracy hurdles that grew more obvious as Flow complexity increased. While these models produced valid metadata, they often missed the actual meaning behind a request. This meant a Flow could go live while failing to perform the specific tasks you originally described.

Adaptability also stayed out of reach. These models struggled to handle your unique customizations, such as custom Apex actions or specific HTTP callouts. This approach created several persistent issues:

Ultimately, these limitations made it difficult to enforce accuracy. Because the model operated as a monolith, the team could not determine if a failure happened during planning or the final generation. This lack of transparency prevented the system from delivering reliable, intent-aligned automation at a larger scale.

The architectural shift prioritizes deterministic results and eliminates hallucinations. While standard models often struggle with semantic drift and invalid data combinations, this new structure enforces strict rules for metadata and Flow types.

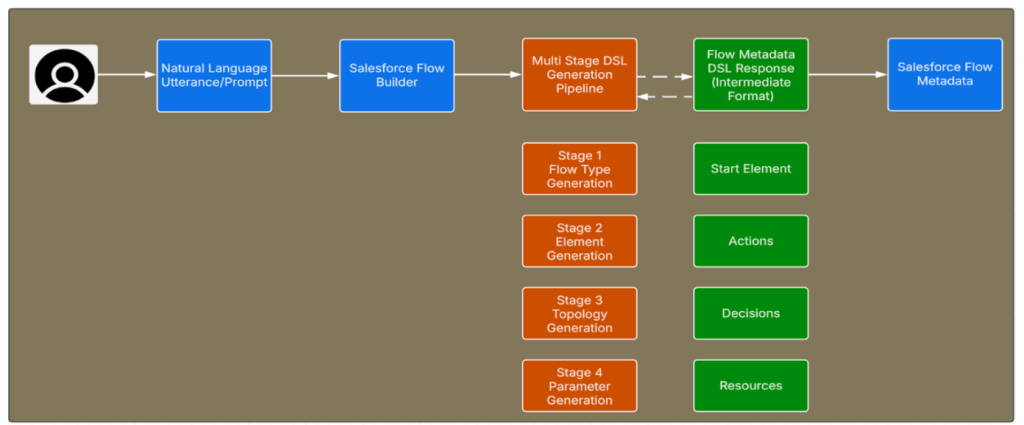

The team replaced the old monolithic approach with a modular, multi-stage pipeline. This system breaks the generation process into specialized phases with clear validation gates. A new Domain-Specific Language (DSL) defines exactly what the system can build, which stops invalid constructs before they ever exist.
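A minimal sketch of what a staged pipeline with validation gates can look like, under stated assumptions. The stage names, the `ALLOWED_ELEMENTS` vocabulary, and the `planner` callback (a stand-in for an LLM call) are all hypothetical and are not taken from the Agentforce implementation. The point it illustrates is that each stage's output must pass its gate before the next stage runs, so a failure can be attributed to a specific phase.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical constrained vocabulary: the only element kinds this toy DSL permits.
ALLOWED_ELEMENTS = {"start", "decision", "record_update", "screen"}


class GateError(Exception):
    """Raised when a stage's output fails its validation gate."""

    def __init__(self, stage: str, message: str):
        super().__init__(f"{stage}: {message}")
        self.stage = stage


@dataclass
class Stage:
    name: str
    run: Callable[[dict], dict]         # transforms the pipeline state
    gate: Callable[[dict], list[str]]   # returns a list of problems (empty = pass)


def run_pipeline(stages: list[Stage], state: dict) -> dict:
    """Run stages in order; stop at the first gate that reports problems."""
    for stage in stages:
        state = stage.run(state)
        problems = stage.gate(state)
        if problems:
            raise GateError(stage.name, "; ".join(problems))
    return state


def plan_gate(state: dict) -> list[str]:
    # Reject any planned element that is outside the constrained vocabulary.
    return [f"unknown element kind: {k}" for k in state["plan"] if k not in ALLOWED_ELEMENTS]


def generate(state: dict) -> dict:
    # Expand the validated plan into concrete (toy) elements.
    elements = [{"kind": k, "name": f"{k}_{i}"} for i, k in enumerate(state["plan"])]
    return {**state, "elements": elements}


def generate_gate(state: dict) -> list[str]:
    kinds = [e["kind"] for e in state["elements"]]
    return [] if kinds.count("start") == 1 else ["exactly one start element is required"]


def build_stages(planner: Callable[[str], list[str]]) -> list[Stage]:
    # `planner` is an assumed stand-in for the model call that proposes a plan.
    return [
        Stage("plan", lambda s: {**s, "plan": planner(s["prompt"])}, plan_gate),
        Stage("generate", generate, generate_gate),
    ]
```

Because `GateError` carries the stage name, a caller can tell whether a rejected request failed during planning or during generation, which is the kind of visibility a monolithic model does not offer.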

The new model separates design from implementation through these methods:

This phased approach ensures accuracy through enforced constraints rather than trying to fix mistakes after the fact.

Natural Language Prompt to Agentforce for Flow Generation using Multi Stage DSL Generation Pipeline

Fine-tuned models created operational overhead that slowed innovation velocity. Supporting a new Flow type or fixing correctness issues required assembling datasets, retraining models, and moving through sequential testing environments. These steps meant even small changes often took months to reach users.

This slow cadence made it difficult to respond to evolving platform requirements. Accuracy improvements depended on model release timelines rather than engineering intent, while changing Flow schemas required repeated retraining cycles.

The team eliminated the need for retraining by moving to a DSL-based architecture with open-source large language models. This shift allows the team to address correctness fixes and schema changes through deterministic rule updates. Now, accuracy improves continuously instead of waiting for infrequent, high-risk releases.

Flow operates at a massive scale. It supports over 63 distinct Flow types and features schemas that evolve with every release. Each type carries its own execution semantics and start configurations, which previously made manual generation approaches far too brittle to maintain.

The team solved this by automating DSL generation directly from Flow metadata definitions. These constructs now derive programmatically from the Flow Metadata WSDL. This method ensures that generation rules reflect the platform schema at all times. As the platform introduces new features, the DSL evolves automatically.
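To make the idea of deriving constructs from a schema concrete, here is a small sketch using Python's standard `xml.etree.ElementTree`. The `TOY_SCHEMA` fragment, its type names, and the `derive_constructs` and `check` helpers are invented for illustration; the real Flow Metadata WSDL is far larger and the team's generator is not shown in the article.

```python
import xml.etree.ElementTree as ET

XS = "{http://www.w3.org/2001/XMLSchema}"

# Toy XSD fragment (an assumption for illustration, not the Flow Metadata WSDL).
TOY_SCHEMA = """
<xs:schema xmlns:xs="http://www.w3.org/2001/XMLSchema">
  <xs:complexType name="ToyDecision">
    <xs:sequence>
      <xs:element name="label" type="xs:string"/>
      <xs:element name="rules" type="xs:string" minOccurs="0" maxOccurs="unbounded"/>
    </xs:sequence>
  </xs:complexType>
  <xs:complexType name="ToyAssignment">
    <xs:sequence>
      <xs:element name="label" type="xs:string"/>
      <xs:element name="assignTo" type="xs:string"/>
    </xs:sequence>
  </xs:complexType>
</xs:schema>
"""


def derive_constructs(schema_text: str) -> dict:
    """Build a {type name: {field: rules}} table from the schema's complex types."""
    root = ET.fromstring(schema_text.strip())
    constructs = {}
    for ctype in root.iter(f"{XS}complexType"):
        fields = {}
        for el in ctype.iter(f"{XS}element"):
            fields[el.get("name")] = {
                # XSD defaults minOccurs and maxOccurs to 1 when omitted.
                "required": el.get("minOccurs", "1") != "0",
                "repeated": el.get("maxOccurs", "1") not in ("0", "1"),
            }
        constructs[ctype.get("name")] = fields
    return constructs


def check(constructs: dict, kind: str, payload: dict) -> list[str]:
    """Validate a candidate element against the derived table."""
    if kind not in constructs:
        return [f"unknown construct {kind}"]
    spec = constructs[kind]
    problems = [f"unexpected field {k}" for k in payload if k not in spec]
    problems += [
        f"missing field {k}"
        for k, rule in spec.items()
        if rule["required"] and k not in payload
    ]
    return problems
```

In this sketch, regenerating the table from an updated schema is the only step needed to pick up a new type or field, which mirrors the article's claim that the DSL follows the platform schema rather than a hand-maintained rule list.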

Because the DSL pulls from authoritative metadata, the system stays aligned with actual runtime behavior. This change removes the risk of schema-drift errors. It also allows Flow generation accuracy to scale naturally alongside the platform.


Complex Flow types present correctness challenges that go beyond static metadata. Screen Flows act as user interfaces, which demand accurate component selection and reactive behavior. Custom actions add another layer of difficulty by introducing specific semantics that models cannot reliably predict.

Start elements also function as polymorphic components. They contain fields that change depending on the Flow type. A single generation approach often fails to handle these variations, leading to incorrect or invalid configurations.

The constrained DSL architecture fixes this by enforcing specific rules at every stage. The pipeline selects valid elements and validates metadata in real time. It also calls dynamic APIs to resolve specific organization details. These steps ensure accuracy even in complex, UI-driven scenarios.
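One way to picture type-dependent start validation is a rule table keyed by Flow type, combined with a lookup against the target org. The sketch below assumes illustrative flow type names and field names (`record_triggered`, `trigger_event`, and so on) and a hypothetical `object_exists` callback standing in for a dynamic API call; none of these come from the article or the Flow schema.

```python
from typing import Callable

# Hypothetical per-type rules for the start element (names are illustrative only).
START_RULES = {
    "record_triggered": {
        "required": {"object", "trigger_event"},
        "optional": {"entry_conditions"},
    },
    "scheduled": {
        "required": {"start_date", "frequency"},
        "optional": {"object"},
    },
    "screen": {"required": set(), "optional": set()},
}


def validate_start(
    flow_type: str,
    start: dict,
    object_exists: Callable[[str], bool],
) -> list[str]:
    """Check a start element against the rules for its flow type.

    `object_exists` is an assumed stand-in for a call that resolves
    org-specific details, such as whether an object is defined.
    """
    rules = START_RULES.get(flow_type)
    if rules is None:
        return [f"unsupported flow type: {flow_type}"]

    present = set(start)
    allowed = rules["required"] | rules["optional"]
    problems = [f"missing field: {f}" for f in sorted(rules["required"] - present)]
    problems += [
        f"field not allowed for {flow_type}: {f}" for f in sorted(present - allowed)
    ]

    # Only consult the org when the field is legal for this flow type.
    obj = start.get("object")
    if obj is not None and "object" in allowed and not object_exists(obj):
        problems.append(f"unknown object: {obj}")
    return problems
```

The same start payload can be valid for one flow type and invalid for another, which is why a single untyped generation pass tends to produce the incorrect configurations described above.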


Measuring accuracy requires more than simple observation. Manual reviews fail to scale, and basic indicators like successful saves do not prove that a Flow honors a user’s intent.

The team solved this by building an automated evaluation framework. This system uses hundreds of prompts and a Flow-as-a-Judge model to test results. The framework evaluates every generated Flow on three specific dimensions:

By running identical prompts through different methods, the team compared outcomes directly. The constrained DSL approach shows superior fidelity for complex types like Screen Flows. This framework provides the quantitative evidence needed to prove the architectural shift improves accuracy.
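The comparison step can be sketched as a small aggregation over judge scores. Everything here is an assumption for illustration: the record shape, the method labels, and the placeholder dimension name `dim_1` (the article's three dimensions are not listed in this text, so they are not reproduced). The sketch refuses to compare methods unless both were run on the same prompt set.

```python
from collections import defaultdict
from statistics import mean


def summarize(records: list[dict]) -> dict:
    """Average judge scores per (method, dimension) pair."""
    buckets = defaultdict(list)
    for r in records:
        buckets[(r["method"], r["dimension"])].append(r["score"])
    return {key: mean(vals) for key, vals in buckets.items()}


def compare(records: list[dict], baseline: str, candidate: str) -> dict:
    """Return candidate-minus-baseline mean score for each shared dimension."""
    prompts = defaultdict(set)
    for r in records:
        prompts[r["method"]].add(r["prompt_id"])
    # A fair comparison requires both methods to have seen identical prompts.
    if prompts[baseline] != prompts[candidate]:
        raise ValueError("methods were not run on identical prompts")

    summary = summarize(records)
    dims = sorted(
        d for (m, d) in summary if m == candidate and (baseline, d) in summary
    )
    return {d: summary[(candidate, d)] - summary[(baseline, d)] for d in dims}
```

Calling `compare(records, "baseline", "candidate")` on a list of such records yields one delta per dimension; a positive value means the candidate method scored higher on that dimension across the shared prompts.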

The post How Agentforce Achieved Accurate Flow Generation Across 461 Billion Monthly Executions Using a Constrained DSL appeared first on Salesforce Engineering Blog.
