This article demonstrates how to build scalable, autonomous AI agent systems that overcome infrastructure constraints like rate limits. It provides a blueprint for moving from LLM prototypes to production-grade systems that drive significant business value through automated workflows.

In our Engineering Energizers Q&A series, we highlight the engineering minds driving innovation across Salesforce. Today, we spotlight Rajas Mhatre, Senior Director of Software Engineering at Salesforce, who leads the development of autonomous engagement agents that transform sales operations by generating over $100 million in pipeline, creating more than 10,000 opportunities, and contributing to 1,500 closed deals through automated workflows.

Explore how the team managed high-volume inbound leads in real time under strict rate limits while coordinating distributed agents to prevent duplication and data inconsistencies across fragmented sales data and engagement workflows.

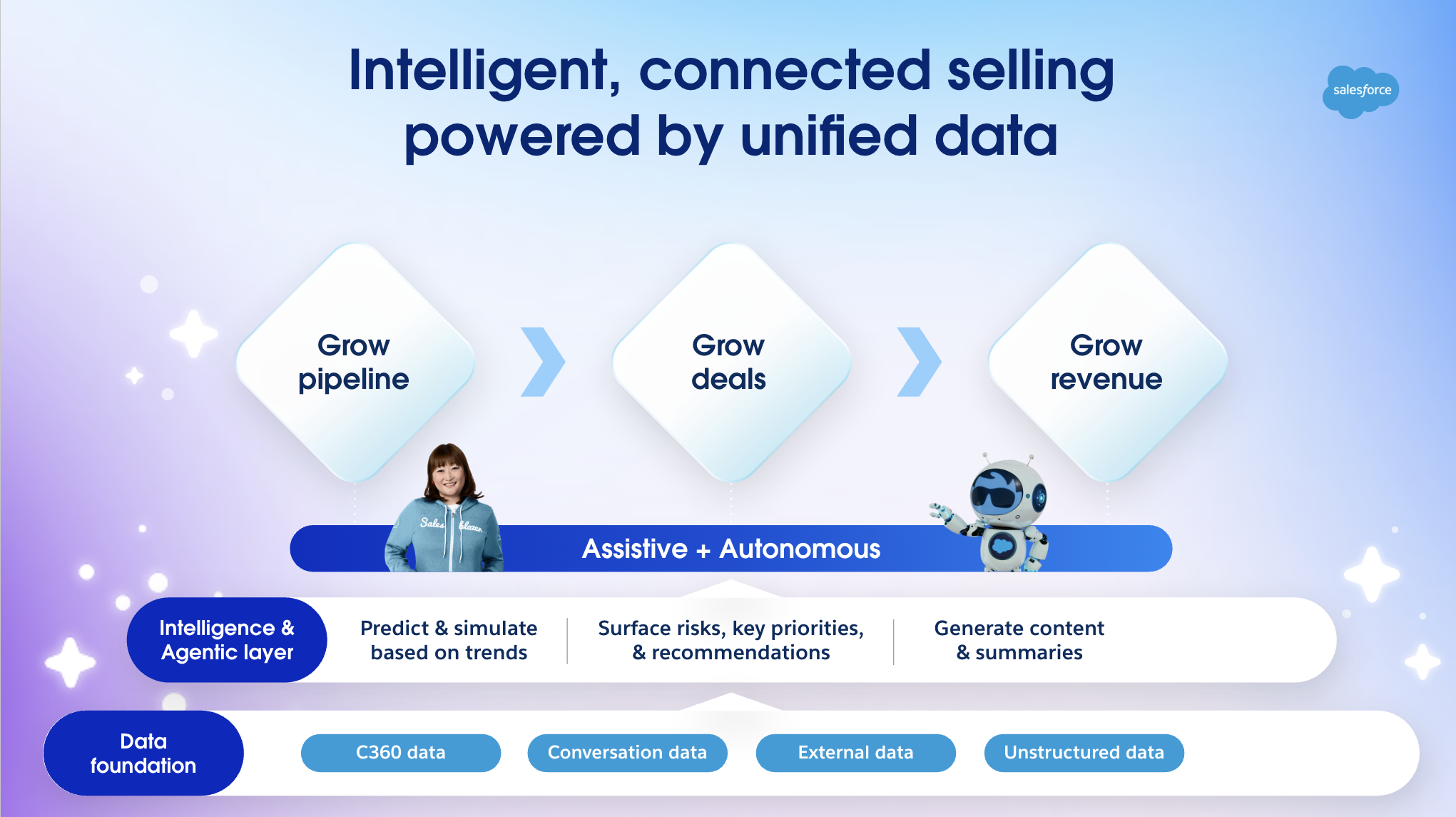

The team transforms Sales Cloud from a stagnant record system into a proactive engine driven by autonomous Lead Nurturing agents. While traditional CRM systems only capture data after the fact, these new agents take the lead on the actual work.

The team embeds Agentforce-powered agents directly into the sales workflow to act as digital team members. These agents monitor inbound signals and execute outreach, qualification, and scheduling without needing a person to start the process.

This shift enables organizations to generate pipeline at scale through continuous execution. Every lead receives an immediate response, which eliminates gaps in human availability and keeps the sales process moving at all times.

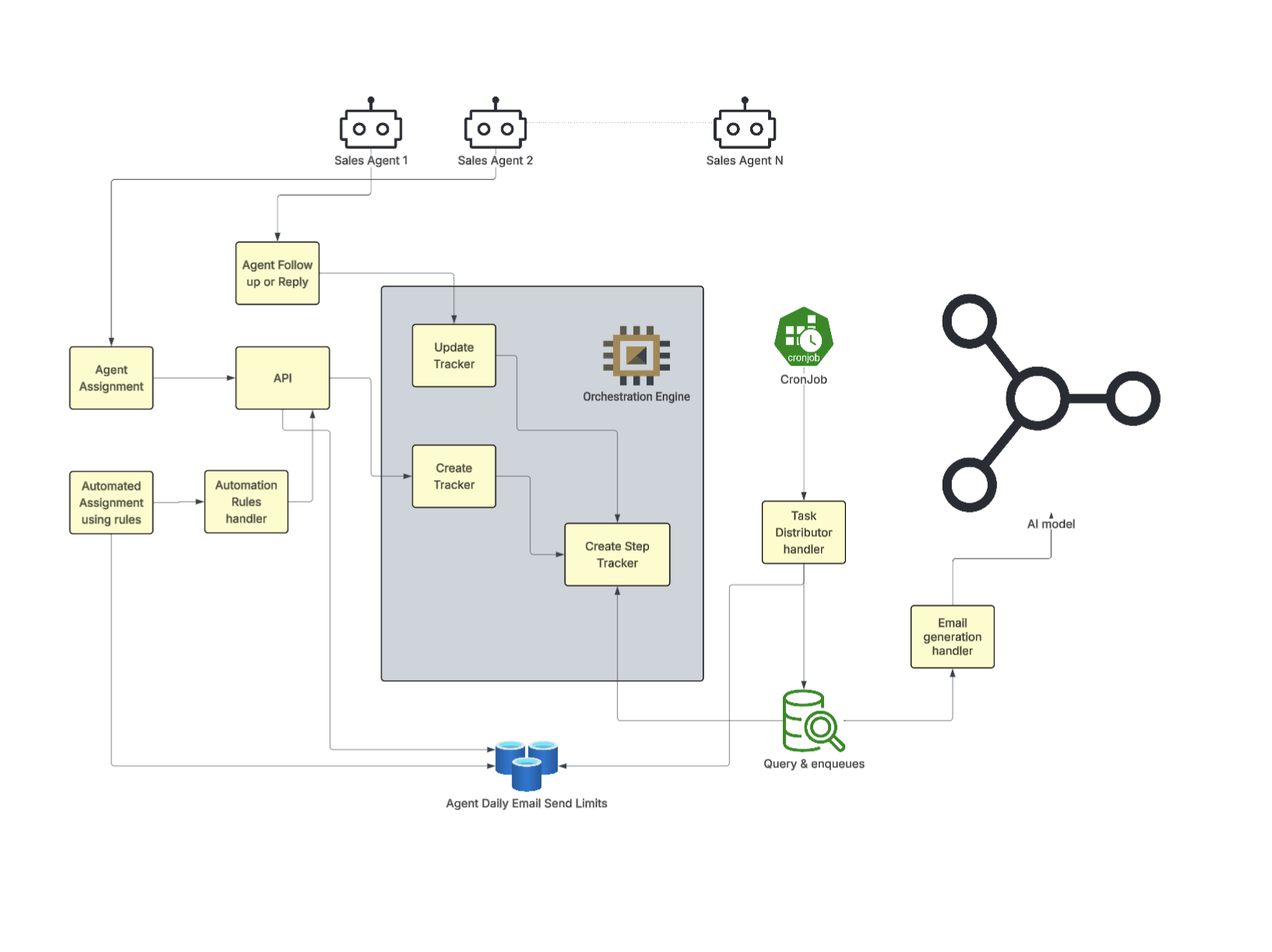

This architecture allows the platform to coordinate AI agents and human workflows in a unified system that preserves reliability and fairness. Consequently, customers can assign large volumes of outreach without calculating throughput because the queue continuously regulates dispatch across all execution contexts.

A look at how the team agentifies sales cycles.

The primary constraint stems from a mismatch between high-volume lead generation and the limited capacity of human-driven workflows. While marketing systems operate at a programmatic scale, manual sales processes often struggle to keep pace with inbound demand.

To bridge this gap, the team re-architected lead engagement using autonomous Lead Nurturing agents within the Agentforce platform. The system now ingests inbound signals and executes outreach, qualification, and follow-up in real time rather than waiting for human triggers.

This transition required building deterministic workflows on top of LLM-driven generation to ensure responses remain consistent and contextually relevant. The system integrates directly with email and calendar infrastructure to support end-to-end engagement.

By shifting to system-driven orchestration, the team removed the response delay caused by waiting on human triggers. The result is a scalable engagement model that keeps pace with modern lead volume and velocity.

Engineering validation in a customer-zero environment shows that the system operates reliably at enterprise scale. The internal sales organization at Salesforce provided a high-volume testbed to validate multi-agent orchestration and throughput under production conditions.

This architecture generated over $100 million in pipeline and created more than 10,000 opportunities. It contributed to 1,500 closed deals within the SMB segment and sourced thousands of meetings through continuous automated engagement.

A critical outcome involves the processing of leads that previously remained idle due to human capacity limits. The agent continuously ingests this volume and converts those leads into active pipeline.

The results validate that the architecture scales horizontally and maintains throughput under load. It delivers measurable business outcomes without requiring additional human resources.

A look inside the multi-agent strategy.

The engineering challenge centered on enforcing deterministic coordination across multiple agents while navigating strict rate limits and provider constraints. Without a centralized control plane, early iterations saw failure rates between 50% and 60% due to system overloads and duplicate engagements.

The solution involved building a distributed persistent queue to manage the execution layer. This architecture ensures the system engages each lead exactly once and maintains task ownership across the environment.
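One way to picture the "engage each lead once, with clear task ownership" guarantee is a claim ledger keyed by lead and workflow step. The sketch below is illustrative only: `EngagementLedger`, `try_claim`, `complete`, and the lease timeout are hypothetical names and choices, not the team's implementation, and an in-memory dictionary stands in for whatever persistent store with atomic writes a real queue would use.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Claim:
    owner: str          # worker that currently owns the task (hypothetical id)
    expires_at: float   # lease expiry, in seconds
    done: bool = False  # True once the engagement has been executed


@dataclass
class EngagementLedger:
    """In-memory stand-in for a persistent store (assumption, not from the article)."""
    lease_seconds: float = 30.0
    _claims: Dict[str, Claim] = field(default_factory=dict)

    def try_claim(self, lead_id: str, step: str, owner: str,
                  now: Optional[float] = None) -> bool:
        """Return True only if this owner may execute the step for this lead."""
        now = time.time() if now is None else now
        key = f"{lead_id}:{step}"
        claim = self._claims.get(key)
        # Refuse if the step is finished or another live lease holds it.
        if claim is not None and (claim.done or claim.expires_at > now):
            return False
        self._claims[key] = Claim(owner=owner, expires_at=now + self.lease_seconds)
        return True

    def complete(self, lead_id: str, step: str, owner: str) -> bool:
        """Mark the step done; only the current owner is allowed to do so."""
        claim = self._claims.get(f"{lead_id}:{step}")
        if claim is None or claim.owner != owner or claim.done:
            return False
        claim.done = True
        return True
```

In this sketch a second agent that picks up the same lead is turned away while the first agent's lease is live, and a crashed agent's work becomes claimable again once the lease expires. A production system would need the claim and completion writes to be atomic in the backing store for the guarantee to hold.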

A three-tier priority model governs the workflow by processing replies first, then outreach, then nudges. Round-robin scheduling and batching techniques allow the system to adjust throughput dynamically, staying within the functional limits of the underlying LLMs and email infrastructure.
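The priority and round-robin behavior described above can be sketched as a small dispatcher. This is a minimal illustration under stated assumptions: the `Dispatcher` class, the `context` key (standing in for an execution context such as a customer org), and the per-tick `budget` are hypothetical, and the article does not specify how the real queue sizes its batches.

```python
from collections import OrderedDict, deque
from enum import IntEnum
from typing import Deque, Dict, List, Tuple


class Priority(IntEnum):
    REPLY = 0     # responses to inbound replies go first
    OUTREACH = 1  # then first-touch outreach
    NUDGE = 2     # then follow-up nudges


class Dispatcher:
    def __init__(self) -> None:
        # One round-robin ring per tier: context -> FIFO queue of task ids.
        self._tiers: Dict[Priority, "OrderedDict[str, Deque[str]]"] = {
            p: OrderedDict() for p in Priority
        }

    def enqueue(self, context: str, priority: Priority, task_id: str) -> None:
        self._tiers[priority].setdefault(context, deque()).append(task_id)

    def next_batch(self, budget: int) -> List[Tuple[str, str]]:
        """Take up to `budget` tasks: higher tiers first, round-robin within a tier."""
        batch: List[Tuple[str, str]] = []
        for priority in Priority:  # iterates REPLY, OUTREACH, NUDGE
            ring = self._tiers[priority]
            while ring and len(batch) < budget:
                context, tasks = ring.popitem(last=False)  # front of the ring
                batch.append((context, tasks.popleft()))
                if tasks:
                    ring[context] = tasks  # rejoin at the back of the ring
            if len(batch) >= budget:
                break
        return batch
```

Calling `next_batch` once per scheduling tick with a budget derived from the current provider limits keeps dispatch inside those limits, while the ring prevents one context with a large backlog from starving the others.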

This orchestration layer provides the necessary reliability for high-throughput operations. It prevents the chaos of uncoordinated execution and ensures the system maintains steady performance under heavy enterprise loads.

Building trust in autonomous agents requires rigorous control over how systems generate and execute responses. Because these agents manage customer-facing workflows, any inconsistency or inaccuracy directly threatens brand reputation and engagement quality.

The primary difficulty involves balancing the creative flexibility of a large language model with the need for predictable, deterministic behavior. Unchecked generation often leads to hallucinations or messaging that drifts away from approved positioning.

The strategy focuses on constraining responses to predefined workflows and structured outputs. Rather than allowing open-ended generation, the system grounds every response in specific context, such as previous interactions and engagement signals. This approach limits variability and keeps every conversation aligned with actual product capabilities.
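As a rough illustration of constraining generation to structured outputs, the sketch below accepts a model response only if it parses as JSON and names an allowed action and a known template, and otherwise falls back to a human handoff. The action names, the `template_id` field, and the fallback policy are all assumptions made for the example; the article does not describe the actual schema.

```python
import json
from dataclasses import dataclass
from typing import Set

# Hypothetical action vocabulary; the real system's schema is not published.
ALLOWED_ACTIONS = {"send_reply", "propose_meeting", "handoff_to_human"}


@dataclass(frozen=True)
class AgentDecision:
    action: str
    template_id: str
    lead_id: str


def parse_decision(raw: str, lead_id: str, known_templates: Set[str]) -> AgentDecision:
    """Validate raw model output; anything off-schema becomes a human handoff."""
    fallback = AgentDecision("handoff_to_human", "", lead_id)
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return fallback
    if not isinstance(data, dict):
        return fallback
    action = data.get("action")
    template_id = data.get("template_id", "")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return fallback
    if action == "handoff_to_human":
        return fallback
    # Customer-facing actions must reference an approved template.
    if not isinstance(template_id, str) or template_id not in known_templates:
        return fallback
    return AgentDecision(action, template_id, lead_id)
```

The design choice here is that the model selects among approved options rather than writing free-form actions, so an unexpected or malformed response degrades to a safe default instead of reaching the customer.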

By integrating controlled generation with contextual grounding, the architecture ensures that autonomous engagements remain reliable and brand-safe at scale.

Integrating fragmented data sources into a single system remains the primary challenge for end-to-end sales decision-making. Lead signals and activity history often live in different areas like the CRM and external systems, which creates inconsistent workflows.

The team solved this by building a unified data graph. This graph pulls together various signals into one clear customer state. Ingestion pipelines now normalize this data and provide a consistent interface for the agent to use.
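To make the normalization step concrete, here is a simplified sketch that maps records from two sources onto one signal shape and folds them into a single state per lead. The source names, field mappings, and `CustomerState` fields are hypothetical, and a flat per-lead state is used here in place of a full graph for brevity.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, Iterable, List, Optional, Set

# Hypothetical per-source field names; real schemas will differ.
FIELD_MAPS = {
    "crm": {"lead_id": "LeadId", "kind": "ActivityType", "ts": "ActivityDate"},
    "email": {"lead_id": "lead", "kind": "event", "ts": "timestamp"},
}


@dataclass(frozen=True)
class Signal:
    lead_id: str
    source: str
    kind: str
    occurred_at: datetime


@dataclass
class CustomerState:
    lead_id: str
    last_kind: Optional[str] = None
    last_touch: Optional[datetime] = None
    sources: Set[str] = field(default_factory=set)
    history: List[Signal] = field(default_factory=list)


def normalize(source: str, record: dict) -> Signal:
    """Map a source-specific record onto the shared Signal shape."""
    m = FIELD_MAPS[source]
    return Signal(
        lead_id=str(record[m["lead_id"]]),
        source=source,
        kind=str(record[m["kind"]]).lower(),
        occurred_at=datetime.fromisoformat(record[m["ts"]]),
    )


def build_states(signals: Iterable[Signal]) -> Dict[str, CustomerState]:
    """Fold signals, oldest first, into one state per lead."""
    states: Dict[str, CustomerState] = {}
    for s in sorted(signals, key=lambda sig: sig.occurred_at):
        state = states.setdefault(s.lead_id, CustomerState(s.lead_id))
        state.history.append(s)
        state.sources.add(s.source)
        state.last_kind = s.kind
        state.last_touch = s.occurred_at
    return states
```

With every source reduced to the same `Signal` shape, the agent reads one `CustomerState` per lead instead of querying each system separately.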

The system uses this unified context to guide decisions during lead generation, nurturing, and scheduling. Every stage functions as a continuous pipeline where the agent tracks previous interactions and state changes. Centralizing this context within the execution layer allows for smooth orchestration across the entire engagement lifecycle.

Bringing it all together on a unified data layer.

Voice interactions create real-time demands that differ from asynchronous channels. Systems must generate responses instantly without the benefit of delayed reasoning or manual review.

The architecture manages interruptions and unpredictable flows while keeping conversations coherent. Unlike email, voice requires immediate context to reflect previous interactions accurately.

Encoding specific personality traits like tone and adaptability into the agent presents another hurdle. This process involves training on actual interaction data and refining behavior through consistent feedback loops.

The team continues to iterate on conversation design using live data to improve realism. These efforts aim to build voice agents that foster trust and drive engagement during live calls.

The post How Agentforce Lead Nurturing Agents Generated $100M+ Pipeline Under Rate-Limited Infrastructure appeared first on Salesforce Engineering Blog.
