This article highlights how a decade-long partnership between Microsoft and Red Hat has driven significant advancements in hybrid cloud, open source, and AI. Engineers can learn about integrated platforms like ARO, cost-saving benefits, and tools for modernizing applications and scaling AI.

Ten years ago, Microsoft and Red Hat began a partnership grounded in open source and enterprise cloud innovation. This year, we celebrate a decade of collaboration. Our journey together has helped customers accelerate hybrid cloud transformation, empower developers to innovate, and strengthen the open source community to drive modern application innovation.

In 2015, running mission-critical Linux workloads on Microsoft Azure was considered bold and visionary. Ten years later, our partnership with Red Hat has helped thousands of organizations worldwide accelerate digital transformation, set new benchmarks in open innovation, and advance the cloud-native movement for enterprises everywhere.

Together, we introduced Red Hat Enterprise Linux (RHEL) on Azure, setting a new precedent for innovation in the cloud. This collaboration deepened with the addition of Red Hat offerings, including Azure Red Hat OpenShift (ARO)—a fully managed, jointly engineered, and supported application platform that combines cloud scale with open source flexibility.

Red Hat and Microsoft’s global footprint and expanding customer base underline how an open approach and a commitment to solving customer challenges drive adoption and innovation at scale.

Azure Red Hat OpenShift and Red Hat’s automation platforms are powering digital transformation for global leaders across industries:

Together, Microsoft and Red Hat have advanced the industry with major accomplishments:

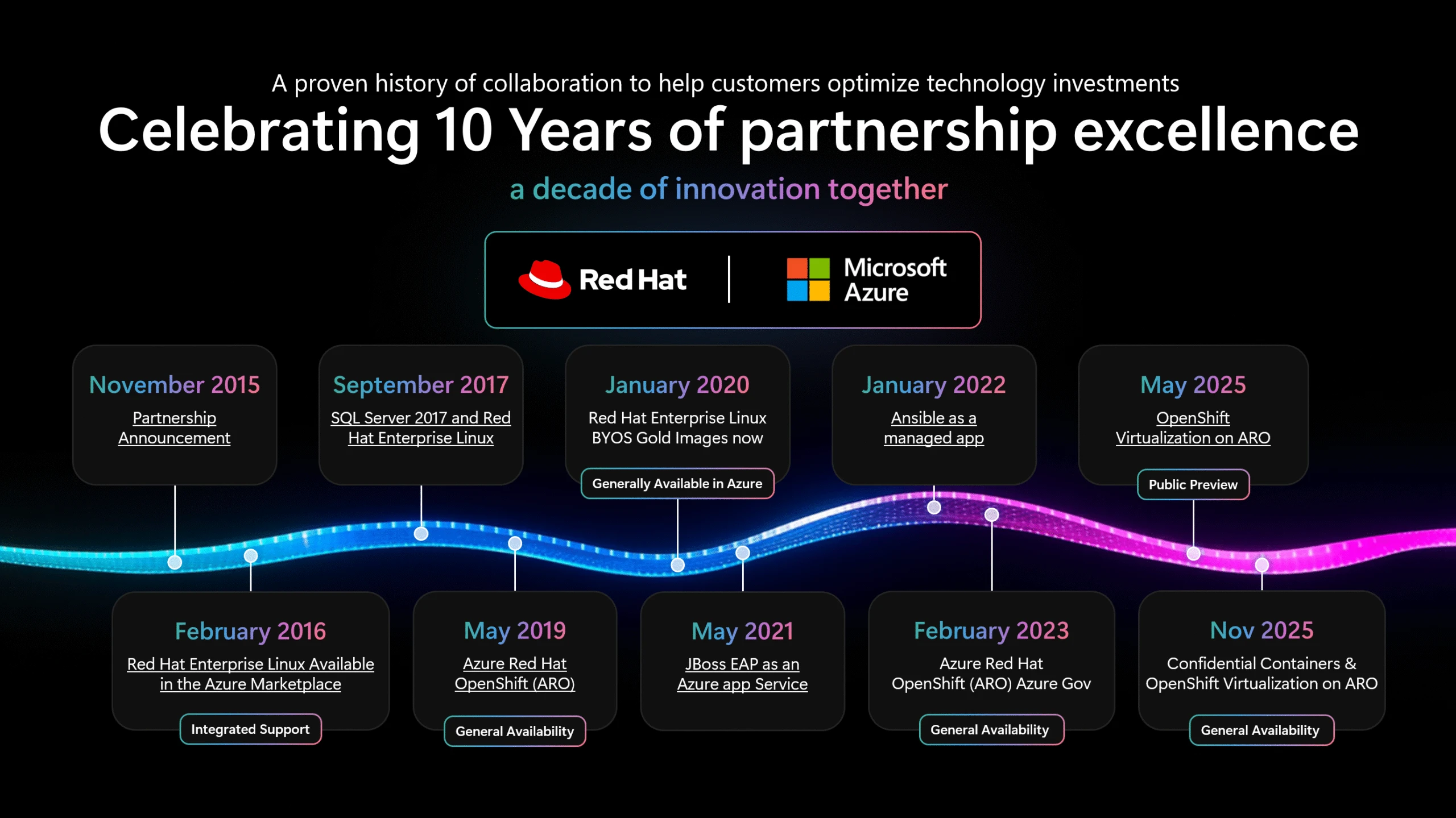

The partnership’s journey is marked by major shared milestones, summarized in the timeline graphic below:

A defining moment of our tenth anniversary was the general availability (GA) of OpenShift Virtualization on Azure Red Hat OpenShift, announced at Microsoft Ignite 2025. Organizations can now run virtual machines (VMs) alongside containers on a single, secure platform, bridging traditional virtualization with cloud-native innovation. Enterprises can modernize their VM workloads into Kubernetes-based environments, using Azure’s performance and security with familiar OpenShift tools.

In addition, Microsoft Ignite 2025 marked the GA of confidential containers on Azure Red Hat OpenShift, delivering enhanced hardware-enforced security and isolation for container workloads. The event also marked the GA of Red Hat Enterprise Linux (RHEL) for HPC on Azure, a secure, high-performance platform tailored for scientific and parallel computing workloads.

Together, these announcements underscore our ongoing commitment to hybrid innovation, security, and helping customers to deploy a wide spectrum of enterprise workloads with agility and confidence.

Ten years of partnership have proven openness is more than a technological strategy—it is a culture of progress, trust, and shared innovation. Microsoft and Red Hat remain committed to pioneering the future of hybrid cloud and AI-powered applications, always keeping customer choice and reliability at the center.

We’re proud to partner with Red Hat not just in supporting our customers, but also in our shared interest in projects like the Linux kernel, Kubernetes, and most recently llm-d. Together, we are committed to continuing our contributions to the health and success of open source technologies and communities.

To our customers, partners, and open source communities: thank you for partnering with us on this journey. Together, we will continue to build the future of enterprise technology—openly, boldly, and collaboratively.

—Brendan Burns, Corporate Vice President, Microsoft Cloud Native

The post A decade of open innovation: Celebrating 10 years of Microsoft and Red Hat partnership appeared first on Microsoft Azure Blog.
