As cloud complexity outpaces human capacity, agentic operations allow engineers to move from manual toil to high-level orchestration. By automating context-aware diagnosis and remediation, teams can maintain reliability and efficiency at the scale required for modern AI workloads.

Cloud operations have reached an inflection point. For more than a decade, the industry has focused on scale: more infrastructure, more data, more services, and more dashboards for managing both infrastructure and applications. While today’s cloud delivers extraordinary flexibility, the rapid growth of modern applications and AI workloads has introduced levels of scale and complexity that traditional operations were not designed for.

As modern applications and AI workloads expand in scale, speed, and interconnectedness, operational demands are evolving just as quickly. Organizations are now looking for an operating model that builds on their existing practices—one that brings intelligence into the flow of work and translates the constant stream of signals into coordinated action across the cloud lifecycle.

Macro trends are pointing towards major shifts in operations. In the era of AI, workloads can move from experimentation to full production in weeks, making constant change the new norm. Infrastructure and applications are continuously updated, scaled, and reconfigured. Telemetry now streams from every layer—health, configuration, cost, performance, and security—while programmable infrastructure enables action at machine speed. At the same time, AI agents are emerging as practical operational partners—able to correlate signals, understand context, and take action within defined guardrails. Together, these shifts are driving the need for a new operating model—one where operations are dynamic, context-aware, and continuously optimized rather than reactive and manual.

Agentic cloud operations put this model into practice by enabling teams to harness AI-powered agents that infuse contextual intelligence into everyday workflows. These agents help accelerate development, migration, and optimization by connecting operational signals directly to coordinated action across the lifecycle. They bring people, tools, and data together, so insights don’t stay passive; they turn into action. The result is faster execution, reduced risk, and cloud operations that improve over time instead of falling behind as complexity grows.

Azure Copilot brings agentic cloud operations to life as the agentic interface for Azure. Rather than adding yet another dashboard, it delivers a unified, immersive experience grounded in a customer’s real environment—subscriptions, resources, policies, and operational history. Teams can work through natural language, chat, console, or CLI, invoking agents directly within their workflows. A centralized management environment brings observability, configuration, resiliency, optimization, and security together—enabling operators to move seamlessly from insight to action in one place.

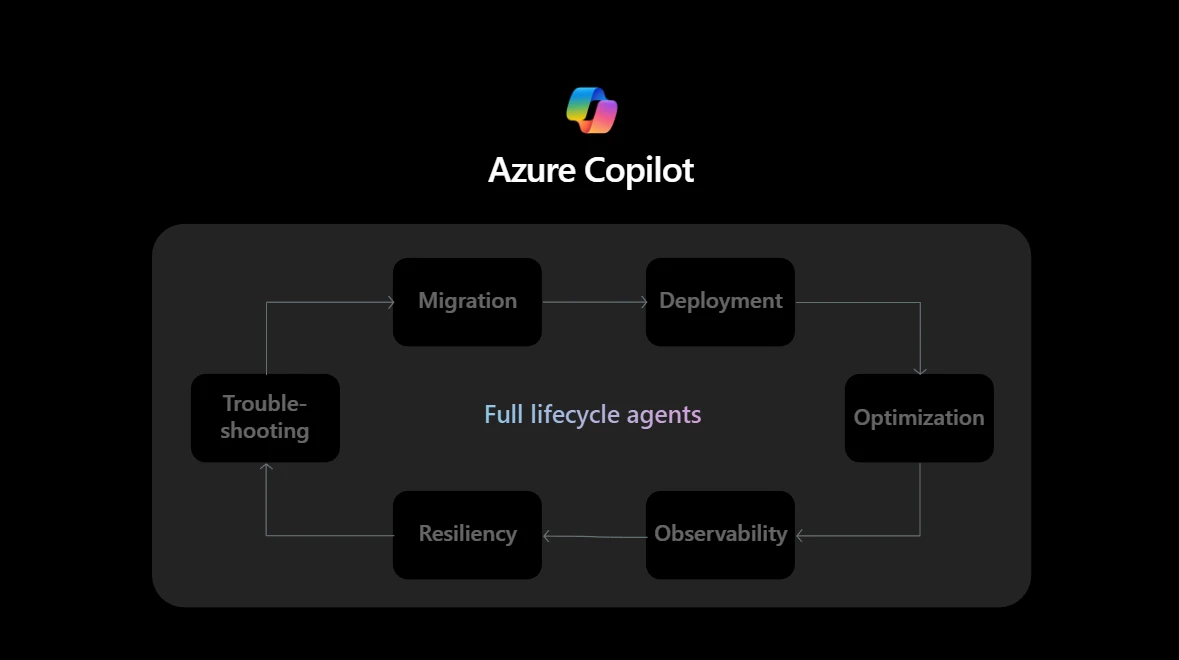

At Ignite, we unveiled the agentic capabilities of Azure Copilot. These capabilities span key operational domains (migration, deployment, optimization, observability, resiliency, and troubleshooting), each designed to bring contextual intelligence into the flow of work. Azure Copilot correlates signals, understands operational context, and takes governed action where it matters. Rather than functioning as discrete bots, its agents operate as a coordinated, context-aware system that continuously strengthens cloud operations.

Azure Copilot and its agents help teams start with clarity and confidence. The Copilot migration agent can assist with discovering existing environments, mapping application and infrastructure dependencies, and identifying modernization paths before workloads move. The deployment agent then guides well-architected design and generates infrastructure-as-code artifacts that establish strong operational patterns from the outset. In parallel, the resiliency agent identifies gaps across availability, recovery, backup, and continuity, so reliability is designed in rather than patched later.

When teams are ready to go live, the Copilot deployment agent supports governed, repeatable deployment workflows that validate both infrastructure and application rollout. The observability agent establishes baseline health from the moment production traffic arrives, while the troubleshooting agent accelerates early-life issue resolution by diagnosing root causes, recommending fixes, and initiating support actions if needed. Throughout this phase, the resiliency agent verifies that recovery and failover configurations hold up under real-world conditions.

In ongoing operations, Azure Copilot’s agentic capabilities deliver compounding value. The observability agent provides continuous, full-stack visibility and diagnosis across applications and infrastructure. The optimization agent identifies and executes improvements across cost, performance, and sustainability, often comparing financial and carbon impact in real time. The resiliency agent moves from validation to proactive posture management, continuously strengthening protection against emerging risks such as ransomware. The troubleshooting agent helps teams shift from reactive firefighting to rapid, context-aware incident resolution. Finally, the migration agent reenters the lifecycle to identify new opportunities to refactor or evolve workloads, not as a one-time event but as continuous modernization.

These capabilities don’t operate as isolated bots. They work within connected, context-aware workflows, correlating real-time signals, understanding operational context, and taking governed action where it matters most. This allows teams to anticipate issues earlier, resolve them faster, and continuously improve their cloud posture across development, migration, and operations. The outcome isn’t fewer tools; it’s better flow, where people, data, and automation operate as a unified system.

Agentic cloud operations are built for mission-critical systems, where governance and control are nonnegotiable. Azure Copilot embeds governance at every layer, allowing enterprises to define boundaries, apply policies consistently, and maintain clear oversight. Features such as Bring Your Own Storage (BYOS) for conversation history give customers even greater control—keeping operational data within their own Azure environment to ensure sovereignty, compliance, and visibility on their terms. All of this is grounded in Microsoft’s Responsible AI principles, ensuring autonomy and safety advance together. Every agent-initiated action honors existing policy, security, and RBAC controls. Actions are always reviewable, traceable, and auditable, ensuring human oversight remains central to automated workflows—not removed from them.

As cloud environments grow more dynamic and complex, operational models must evolve to match them. With Azure Copilot and agentic cloud operations, Microsoft is enabling organizations to operate mission-critical environments with greater speed, clarity, and control—providing the confidence to move forward as the cloud continues to change.

Access the white paper Intelligent Operations: How Agentic AI Is Aiming to Reshape IT.

Find resources, use cases, and get started with Azure Copilot.

The post Agentic cloud operations: A new way to run the cloud appeared first on Microsoft Azure Blog.
