Quantum computing threatens today’s public-key encryption, and adversaries can already act on that future threat through store now, decrypt later (SNDL) attacks. Meta’s framework offers a scalable roadmap for organizations transitioning to post-quantum cryptography (PQC) standards, aiming to secure data for the long term without compromising system performance or incurring excessive costs.

Research indicates that quantum computers will eventually break conventional public-key cryptography, creating security risks for many digital systems across the industry. Although experts estimate this could happen within 10–15 years, sophisticated adversaries could collect encrypted data today in anticipation of a future in which quantum computers can decrypt it, a strategy known as “store now, decrypt later” (SNDL). This means sensitive information could eventually be exposed even though quantum computers capable of breaking it are still years away.

Recognizing this threat, organizations like the US National Institute of Standards and Technology (NIST) and the UK’s National Cyber Security Centre (NCSC) have published migration guidance that discusses target timeframes (including 2030) for prioritizing post-quantum protections in critical systems. This guidance recognizes that complexity and missing or incomplete technical capabilities are important factors impacting PQC migration plans.

Standards are maturing as well: NIST has now published the first industry-wide PQC standards, such as ML-KEM (Kyber) and ML-DSA (Dilithium), with additional algorithms such as HQC on the way. Notably, Meta cryptographers are co-authors of HQC, one of the newly selected PQC algorithms, reflecting our commitment to advancing global cryptographic security. These standards give organizations robust options for defending against SNDL attacks, and Meta aims to share relevant progress and insights to help the broader community navigate the transition to a PQC-secure future.

At Meta, we have taken a proactive approach to preparing for the threats posed by quantum computers and SNDL. With billions of people around the globe relying on our platforms and applications every day, we continue to maintain strong security and data protection standards. As part of this, we have already begun rolling out post-quantum encryption across our internal infrastructure, a multi-year process intended to uphold our security and privacy commitments now and into the future.

We’ve adopted a robust and comprehensive PQC migration strategy guided by the following principles, which aim to ensure a seamless transition:

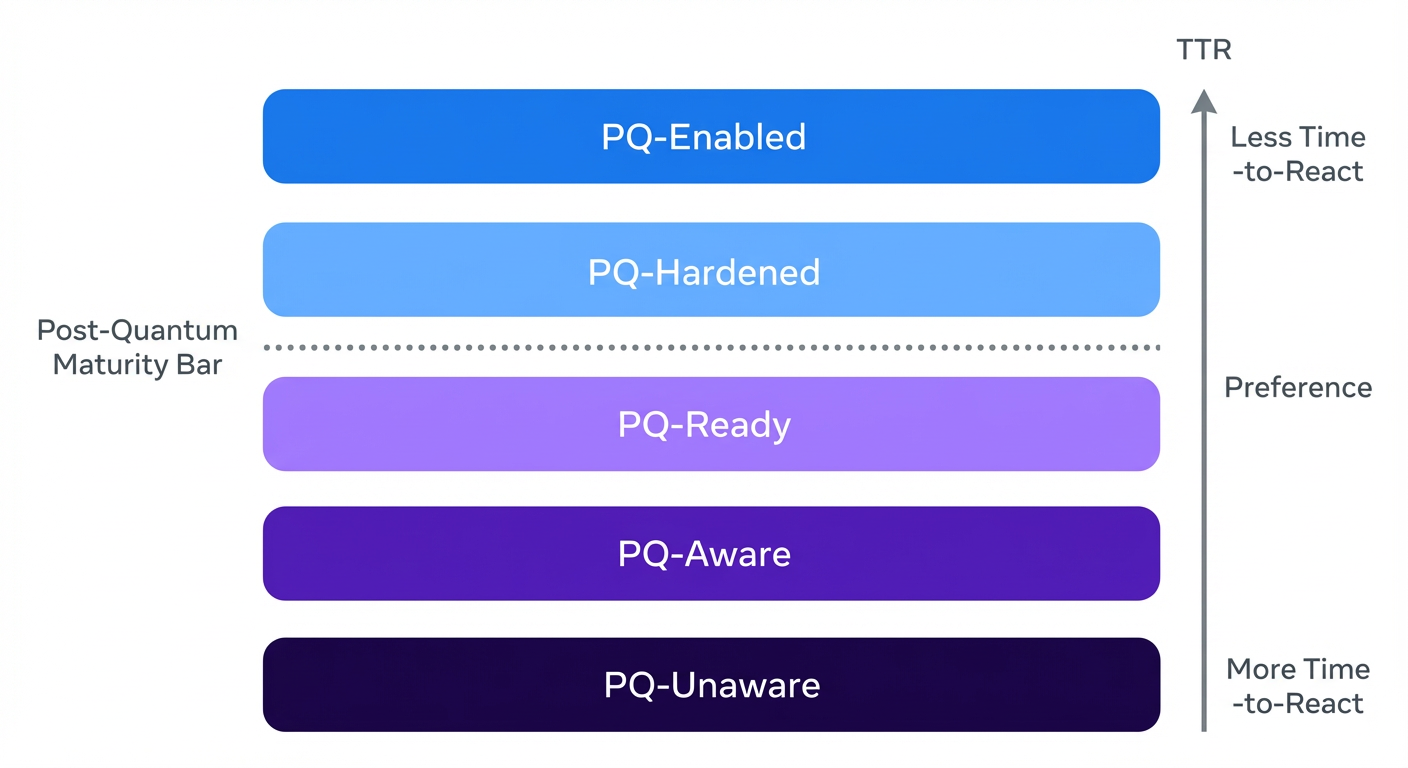

PQC migration is a gradual, complex, multi-year process. It can be helpful to think about it in terms of what we call PQC Migration Levels. The levels are ordered by how quickly they allow an organization to respond to a quantum threat: the shorter the time to react to a relevant quantum event, the better. A relevant quantum event could be an advance in quantum computing, the publication of a new standard, or the establishment of a new industry practice.

PQ-Enabled, the level at which full quantum protection is effectively achieved, is the platinum standard that organizations should aim for across each of their applications and use cases. However, any organization looking to increase its resilience to quantum threats can take steps toward PQ-Enabled. Even starting the migration by setting PQ-Ready as the minimally acceptable level of success may have benefits: at this level, companies that have not budgeted for near-term enablement can still be motivated (and rewarded) for putting in place the building blocks needed to complete risk mitigation in the future.

The proposed strategy is defined as a sequence of steps. Some of them may overlap in time, but the goal is to indicate the different workstreams organizations may need to undertake as part of their PQC readiness.

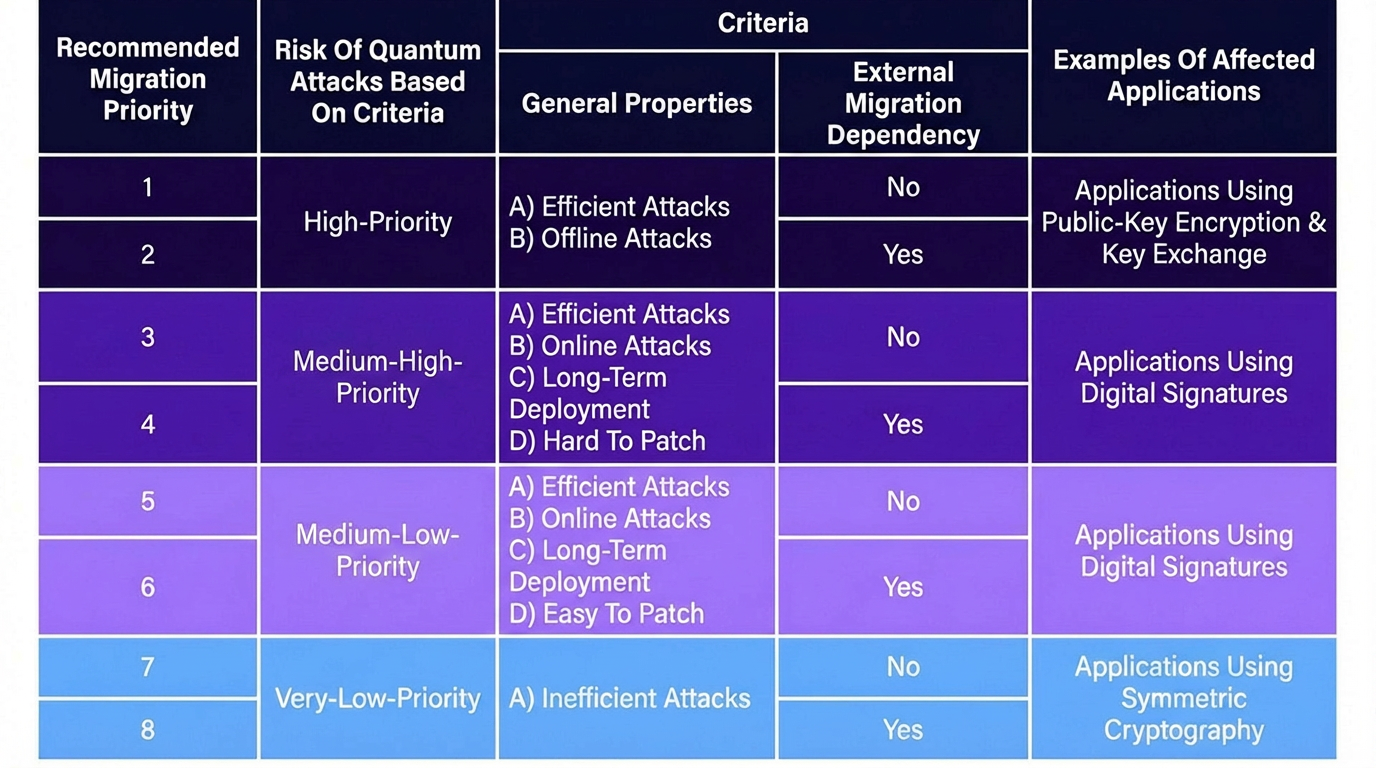

We have created a set of criteria that allows us to classify any application into one of several prioritization levels. To this end, we analyze the various aspects that influence prioritization.

High risk: applications susceptible to attacks that can be initiated now, without a quantum computer (offline attacks), and efficiently completed later (an SNDL attack using Shor’s algorithm). Any application using quantum-vulnerable public-key encryption or key exchange primitives falls into this category. Among high-risk applications, we differentiate those with no external dependencies (which can be migrated right away) from those with external dependencies, which may need to wait until those dependencies are resolved.

Medium risk: applications susceptible to attacks that can only be initiated once a sufficiently powerful quantum computer is available (online attacks), which would then be performed efficiently using Shor’s algorithm. Any application using quantum-vulnerable digital signatures falls into this category.

Within medium risk, we differentiate two subcategories based on how easily an application can upgrade its security mechanism: medium-high risk applications are hard to patch (e.g., applications with public keys baked into hardware), while medium-low risk applications can be patched (e.g., through software upgrades). Patchability is particularly relevant for applications with long lifespans (i.e., development time plus time deployed in the field).

Low risk: applications susceptible only to inefficient quantum attacks (Grover’s algorithm). As presented in many academic publications (e.g., Gheorghiu and Mosca, 2025), the enormous resources required to run such an attack, which raise doubts about whether it will ever be feasible, make this the lowest-risk category. Any application using symmetric cryptography with inadequate parameters falls into this category.
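For intuition on why Grover-based attacks rank lowest, the standard textbook estimate is worked below for an illustrative 128-bit key (the key size is an example, not a parameter from the criteria), ignoring quantum error-correction overhead. Grover’s algorithm finds one key among $N = 2^k$ candidates in about $\frac{\pi}{4}\sqrt{N}$ sequential iterations, and splitting the search across $M$ quantum processors shortens each one’s run only by a factor of $\sqrt{M}$ while increasing the total work:

$$
T_{\text{serial}}(N) \approx \frac{\pi}{4}\sqrt{N}, \qquad T_{\text{serial}}\!\left(2^{128}\right) \approx \frac{\pi}{4}\cdot 2^{64} \approx 2^{63.7}
$$

$$
T_{\text{parallel}} \approx \frac{\pi}{4}\sqrt{\frac{N}{M}} = \frac{T_{\text{serial}}(N)}{\sqrt{M}}, \qquad W_{\text{total}} \approx M \cdot \frac{\pi}{4}\sqrt{\frac{N}{M}} = \frac{\pi}{4}\sqrt{NM}
$$

Even in the best case, Grover therefore halves the effective key length rather than breaking the cipher outright. One common reading of “inadequate parameters” is a key short enough that this halved margin is no longer comfortable; doubling the key length (e.g., 256-bit keys, which leave roughly $2^{128}$ iterations) restores it.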

The table below summarizes the proposed criteria.

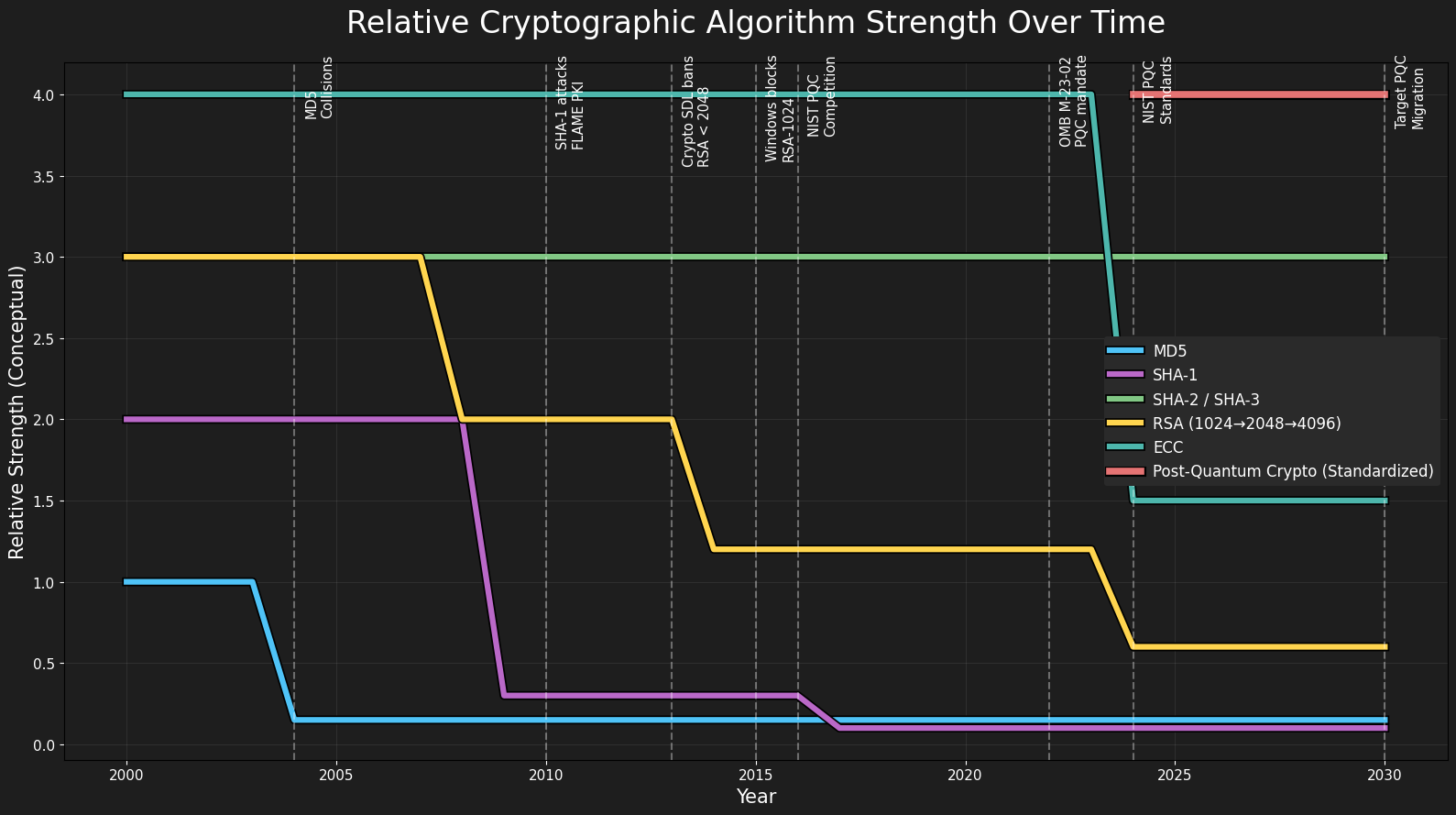
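The criteria can be expressed as a small classification function. This is a hypothetical sketch, not Meta’s tooling: the enum names, the `Application` fields, and the rule that an application takes the most severe level implied by any primitive it uses are assumptions for illustration. It also treats every symmetric use as low risk pending a review of its parameters.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Primitive(Enum):
    """Broad classes of cryptographic primitives an application may use."""
    PUBLIC_KEY_ENCRYPTION = "public-key encryption"  # e.g., RSA-OAEP
    KEY_EXCHANGE = "key exchange"                    # e.g., ECDH, X25519
    DIGITAL_SIGNATURE = "digital signature"          # e.g., ECDSA, RSA-PSS
    SYMMETRIC = "symmetric"                          # e.g., AES, HMAC


class Risk(Enum):
    HIGH_NO_DEPENDENCIES = "high (can migrate now)"
    HIGH_WITH_DEPENDENCIES = "high (blocked on external dependencies)"
    MEDIUM_HIGH = "medium-high"
    MEDIUM_LOW = "medium-low"
    LOW = "low"


@dataclass
class Application:
    name: str
    primitives: set[Primitive] = field(default_factory=set)
    has_external_dependencies: bool = False
    patchable: bool = True  # False if, e.g., public keys are baked into hardware


def classify(app: Application) -> Risk:
    """Return the most severe risk level implied by the app's primitives."""
    if app.primitives & {Primitive.PUBLIC_KEY_ENCRYPTION, Primitive.KEY_EXCHANGE}:
        # Offline (SNDL) attack: record traffic now, break it later with Shor's algorithm.
        if app.has_external_dependencies:
            return Risk.HIGH_WITH_DEPENDENCIES
        return Risk.HIGH_NO_DEPENDENCIES
    if Primitive.DIGITAL_SIGNATURE in app.primitives:
        # Online attack: forging a signature needs a quantum computer at attack time.
        return Risk.MEDIUM_LOW if app.patchable else Risk.MEDIUM_HIGH
    if Primitive.SYMMETRIC in app.primitives:
        # Only Grover-style attacks apply; key sizes still need review.
        return Risk.LOW
    raise ValueError(f"{app.name}: no cryptographic primitives recorded")


# Hypothetical applications, for illustration only.
apps = [
    Application("chat-transport", {Primitive.KEY_EXCHANGE, Primitive.SYMMETRIC}),
    Application("firmware-update", {Primitive.DIGITAL_SIGNATURE}, patchable=False),
    Application("blob-store", {Primitive.SYMMETRIC}),
]
for app in apps:
    print(f"{app.name}: {classify(app).value}")
```

Taking the most severe level per application keeps the sketch simple; a real inventory would more likely classify each individual use of cryptography, since one application can contain several independently migratable components.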

The strength of cryptographic algorithms decays over time, as depicted in the chart below. Since the inception of cryptography, we have seen many ciphers and algorithms rise and fall in terms of security and adoption. The continuous need to replace cryptographic algorithms requires, at a minimum, an understanding of where cryptography is being used. The problem is that cryptography is ubiquitous, and finding every instance of a cryptographic primitive in a large infrastructure and codebase is inherently challenging.

The process of mapping all uses of cryptography within an organization is called Crypto Inventorying. For a company-wide PQC migration strategy, it is a critical prerequisite for completing the work. An inventory can be built by applying two complementary strategies.
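As one illustrative ingredient of an inventory, a naive static scan can flag source lines that mention quantum-vulnerable public-key algorithms. This is a hypothetical sketch, not a description of Meta’s tooling: the pattern list, the `Finding` record, and the file suffixes are assumptions, and text matching alone both over-reports (e.g., hybrid group names containing “X25519”) and under-reports (cryptography reached through wrappers or configuration).

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from pathlib import Path

# Hypothetical pattern set: identifiers that often signal quantum-vulnerable
# public-key cryptography. Expect both false positives and false negatives.
PATTERNS = {
    "RSA": re.compile(r"\bRSA", re.IGNORECASE),
    "ECDSA": re.compile(r"\bECDSA", re.IGNORECASE),
    "ECDH": re.compile(r"\bECDH", re.IGNORECASE),
    "X25519": re.compile(r"\bX25519", re.IGNORECASE),
    "Ed25519": re.compile(r"\bEd25519", re.IGNORECASE),
}


@dataclass(frozen=True)
class Finding:
    path: str
    line: int
    algorithm: str
    snippet: str


def scan_text(text: str, path: str = "<memory>") -> list[Finding]:
    """Return one Finding per (line, algorithm) match in a file's text."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for algorithm, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append(Finding(path, lineno, algorithm, line.strip()))
    return findings


def scan_tree(root: str, suffixes=(".py", ".go", ".java", ".c", ".cc", ".rs")) -> list[Finding]:
    """Walk a source tree and collect findings into a flat inventory."""
    inventory = []
    for file in Path(root).rglob("*"):
        if file.is_file() and file.suffix in suffixes:
            text = file.read_text(encoding="utf-8", errors="ignore")
            inventory.extend(scan_text(text, str(file)))
    return inventory


sample = "from cryptography.hazmat.primitives.asymmetric import rsa, x25519\n"
for finding in scan_text(sample, "service/keys.py"):
    print(finding)
```

A static scan like this only covers code an organization controls; usages in third-party binaries, hardware, or network peers would need other sources of evidence, such as runtime telemetry.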

Beyond the organization’s own commitment, PQC migration requires certain external prerequisites to be met. Next, we describe what needs to be unblocked for a company-wide PQC migration to be feasible, along with the main unblocking actors. The organization itself is always strongly encouraged to engage in these processes.

| Dependency | Main Unblocking Actor |
|---|---|
| Community-vetted PQC standards | Standardization Bodies (NIST, IETF, ISO, etc.) |
| PQC Support in Hardware | HSM, CPU, and other Hardware Vendors |
| Production-level PQC implementations | Crypto Engineering Community |

The cryptography community has been actively participating in the PQC standardization process. As a result, NIST has recently published the first PQC standards, FIPS 203 (ML-KEM), FIPS 204 (ML-DSA), and FIPS 205 (SLH-DSA), and announced a second list of algorithms to be standardized. Meta has been actively contributing, co-authoring algorithms selected for standardization (e.g., HQC) as well as other candidates (e.g., BIKE and Classical McEliece).

In addition, the IETF has published two RFCs specifying PQC schemes, and ISO has published another PQC standard. Many other standards are still needed, in particular for higher-layer protocols. Some preliminary drafts are being written, such as IETF Draft #1 and IETF Draft #2, which address specific components of the TLS mechanism (e.g., the key encapsulation step). Considerable work is still needed to finalize these drafts and to cover other parts of the TLS stack, such as PQC X.509 certificates and PQC PKI in general. Most Meta products rely on TLS, so lifting this roadblock is of utmost importance to effectively protect our systems.

In some instances, organizations depend on external hardware vendors because some applications rely on hardware support (e.g., HSMs and CPUs). In such cases, it’s important that organizations align with their vendors as they plan their PQC migration. Meta, for example, is working closely with its hardware vendors on community-vetted, standardized PQC strategies.

Most cryptography-related vulnerabilities stem not from flaws in the algorithms but from their implementations, which may contain bugs or subtle side-channel vulnerabilities. Getting all of this right isn’t trivial. The good news is that the cryptography community has been working on this front for quite some time.

Since 2019, the Open Quantum Safe consortium, part of the Linux Foundation Post-Quantum Cryptography Alliance, has been developing LibOQS, a PQC library. Industry organizations are starting to integrate LibOQS. Meta supports and actively works with the LibOQS leads, including by fixing bugs in the library and continuously providing feedback. We’re committed to continuing to foster these strategic collaborations.

Post-quantum cryptography is a comparatively new field, so organizations should not deviate from what reputable public standardization bodies recommend. As mentioned above, NIST has recently published the first PQC standards through its PQC standardization process. For the underlying PQC building blocks, we encourage organizations to consider adopting the NIST-selected PQC algorithms, namely ML-KEM (Kyber) for key encapsulation and ML-DSA (Dilithium) for digital signatures.

In addition to the algorithms listed above, NIST also selected two other signature algorithms: SPHINCS+ (standardized as SLH-DSA in FIPS 205) and Falcon. Compared to Dilithium, the former has considerably larger signatures, while the latter requires floating-point arithmetic. These drawbacks make their adoption considerably harder than the (already somewhat challenging) deployment of ML-DSA.

In terms of security strength, both ML-KEM and ML-DSA are defined with several parameter sets, each offering a different trade-off between performance and security. For ML-KEM, we generally suggest that teams adopt ML-KEM-768, which achieves NIST Security Level 3, although exceptions can be granted for ML-KEM-512, which achieves NIST Security Level 1 (as endorsed by the NIST PQC FAQ), when ML-KEM-768 performance is prohibitive for a particular use case. The same applies to ML-DSA: we prefer ML-DSA-65, but exceptions may be allowed for ML-DSA-44 when performance constraints warrant it.
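The default-plus-exception policy can be encoded in a few lines. In this sketch the sizes and NIST security categories come from FIPS 203 and FIPS 204, while the `choose_parameter_set` function and its `performance_exception` flag are hypothetical, illustrating one way to make the fallback an explicit, reviewable decision rather than a silent default.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ParameterSet:
    name: str
    nist_level: int        # NIST security category
    public_key_bytes: int
    payload_bytes: int     # ciphertext size for ML-KEM, signature size for ML-DSA


# Sizes as specified in FIPS 203 (ML-KEM) and FIPS 204 (ML-DSA).
PARAMETER_SETS = {
    p.name: p
    for p in (
        ParameterSet("ML-KEM-512", 1, 800, 768),
        ParameterSet("ML-KEM-768", 3, 1184, 1088),
        ParameterSet("ML-DSA-44", 2, 1312, 2420),
        ParameterSet("ML-DSA-65", 3, 1952, 3309),
    )
}

# Hypothetical policy: a default per primitive kind, plus the single fallback
# allowed once a performance exception has been granted.
DEFAULT = {"kem": "ML-KEM-768", "signature": "ML-DSA-65"}
PERFORMANCE_FALLBACK = {"kem": "ML-KEM-512", "signature": "ML-DSA-44"}


def choose_parameter_set(kind: str, performance_exception: bool = False) -> ParameterSet:
    """Pick the default set, or the fallback only if an exception was granted."""
    if kind not in DEFAULT:
        raise ValueError(f"unknown primitive kind: {kind!r}")
    table = PERFORMANCE_FALLBACK if performance_exception else DEFAULT
    return PARAMETER_SETS[table[kind]]


for granted in (False, True):
    kem = choose_parameter_set("kem", granted)
    # A KEM exchange transmits one public key and one ciphertext.
    print(kem.name, "level", kem.nist_level,
          "public key + ciphertext bytes:", kem.public_key_bytes + kem.payload_bytes)
```

With these sizes, an ML-KEM-768 exchange carries 2,272 bytes of public key plus ciphertext versus 1,568 bytes for ML-KEM-512, which gives a sense of the bandwidth an exception would save.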

HQC has recently been selected by NIST for standardization. It is based on different mathematics than ML-KEM (error-correcting codes rather than module lattices). This matters if weaknesses are discovered in ML-KEM or its module-lattice approach: an alternative method of PQC protection could still be deployed to protect organizations from SNDL attacks. NIST is currently drafting the HQC standard.

Besides migrating existing applications, we should also prevent new applications from being designed around quantum-vulnerable cryptographic algorithms. This can be done by adding friction to any new use case that tries to use a quantum-vulnerable algorithm.
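One way to add such friction is a check in code review or CI that flags newly introduced quantum-vulnerable algorithms unless a tracked exception exists. The sketch below is hypothetical: the deny-list, the `review_new_usages` function, and the exception format are illustrative assumptions, not a description of Meta’s guardrails.

```python
from __future__ import annotations

# Hypothetical deny-list; a real policy would be maintained centrally and
# cover more algorithm names and aliases.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDSA", "X25519", "Ed25519"}


def review_new_usages(added_algorithms: list[str], exceptions: dict[str, str]) -> list[str]:
    """Return blocking findings for quantum-vulnerable algorithms added by a change.

    `exceptions` maps an algorithm name to a tracking reference (for example a
    ticket ID) that justifies a temporary, reviewed exemption.
    """
    findings = []
    for alg in added_algorithms:
        if alg in QUANTUM_VULNERABLE and alg not in exceptions:
            findings.append(
                f"{alg}: quantum-vulnerable; use a PQC or hybrid alternative "
                "or request a tracked exception"
            )
    return findings


change = ["X25519MLKEM768", "ECDSA"]
for finding in review_new_usages(change, exceptions={}):
    print("BLOCKED:", finding)
```

Keying exceptions by algorithm name alone is deliberately crude; a production guardrail would more plausibly scope each exception to a specific use case and give it an expiry date, so that exemptions do not silently become permanent.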

The deployment of PQC-based solutions generally follows one of two paths: replacement (swapping classical for PQC) or hybrid (combining both).

While replacement reduces bandwidth and complexity, it relies entirely on newer PQC standards that are still maturing. The recent cryptanalysis (and invalidation) of algorithms like SIKE, a candidate in the final round of the NIST PQC standardization process, underscores the importance of relying on thoroughly vetted, time-tested, standardized algorithms during this transition period to maintain robust security.

To mitigate this, we prioritize the hybrid approach by layering a PQC primitive on top of an established classical one, designed so that the combined system should remain at least as secure as the current standard. An adversary would need to break both layers to compromise the system, providing a critical safety net.
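The core of a hybrid key exchange is deriving one session key from both shared secrets, so that learning only one of them does not reveal the key. Below is a minimal sketch of that combining step, assuming both exchanges have already produced fixed-length 32-byte shared secrets (as X25519 and ML-KEM do), which makes plain concatenation unambiguous. It uses HKDF-SHA-256 (RFC 5869) built from Python’s standard library; the function names and the `hybrid-kex-v1` label are hypothetical, and this is not the TLS 1.3 hybrid design, which feeds the concatenated secrets into TLS’s own key schedule.

```python
from __future__ import annotations

import hashlib
import hmac
import os


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract (RFC 5869) with HMAC-SHA-256."""
    if not salt:
        salt = b"\x00" * hashlib.sha256().digest_size
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """HKDF-Expand (RFC 5869) with HMAC-SHA-256."""
    if length > 255 * hashlib.sha256().digest_size:
        raise ValueError("requested output too long")
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_hybrid_secrets(classical_ss: bytes, pq_ss: bytes, context: bytes,
                           length: int = 32) -> bytes:
    """Derive one key from both secrets; predicting it requires knowing both.

    `context` should bind the derivation to the handshake (e.g., a transcript hash).
    """
    prk = hkdf_extract(salt=b"", ikm=classical_ss + pq_ss)
    return hkdf_expand(prk, info=b"hybrid-kex-v1|" + context, length=length)


# Random stand-ins for the outputs of a real X25519 exchange and ML-KEM decapsulation.
classical_ss = os.urandom(32)
pq_ss = os.urandom(32)
session_key = combine_hybrid_secrets(classical_ss, pq_ss, context=b"example-transcript-hash")
print(session_key.hex())
```

The security argument rests on the KDF: if HKDF behaves as a good key derivation function, an attacker who breaks only the classical exchange (or only the PQC one) is still missing half of its input and cannot predict the output.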

Sharing our strategy and learnings doesn’t mean the process is complete. Migrating Meta’s systems, or any other organization’s systems, to post-quantum cryptography takes years of phased work across protocols, products, and infrastructure as standards, implementations, and threats mature. We’ll continue to expand coverage, extend protections, and share progress, and we’ll keep raising the bar to ensure we follow rigorous security practices consistent with evolving industry standards.

The information in this article is shared for informational purposes only and does not constitute professional, technical, or legal advice, nor does it constitute a guarantee of any particular security outcome. Organizations should conduct their own assessments and consult qualified professionals before making cryptographic implementation decisions.

This work reflects a broad, cross-company effort. We’re grateful to colleagues across Meta who are helping shape our post-quantum cryptography migration strategy and turn it into practice through system design, implementation, deployment planning, measurement, and ongoing operations. In particular, we’d like to acknowledge the invaluable contributions and collaboration from teams across Transport Security (Sheran Lin, Jolene Tan, Kyle Nekritz, Ameya Shendarkar), WhatsApp (Sebastian Messmer, Maayan Sagir Hever, Julian Maingot, Alex Kube, Ronak Patel), Facebook/Messenger (Emma Connor, Jasmine Henry), Infrastructure (Dong Wu, Grace Wu, (Seattle) Weiyuan Li, Yue Li, Shay Gueron Grunbaum, Xiaoyi Fei), Reality Labs (Marcus Hodges), Hardware (Hendrik Volkmer, Vijay Sai Krishnamoorthy), and the Payments team (Hootan Shadmehr, Hema Pamarty, Ryan DeSouza). We also thank Chris Wiltz and the many additional engineers, researchers, program managers, and reviewers across Security, Product, and Policy whose feedback improved both the technical clarity and the practical guidance in this post.

The post Post-Quantum Cryptography Migration at Meta: Framework, Lessons, and Takeaways appeared first on Engineering at Meta.
