Engineers can bypass the 'marathon of misery' of multi-year SASE deployments. By using programmable, identity-centric tools, teams can secure global infrastructure and AI workflows in weeks rather than years, reducing technical debt and improving performance.

For years, the cybersecurity industry has accepted a grim reality: migrating to a Zero Trust architecture is a marathon of misery. CIOs have been conditioned to expect multi-year deployment timelines, characterized by turning screws, manual configurations, and the relentless care and feeding of legacy SASE vendors.

But at Cloudflare, we believe that kind of complexity is a choice, not a requirement. Today, we are highlighting how our partners are proving that what used to take years now takes weeks. By leveraging Cloudflare One, our agile SASE platform, partners like TachTech and Adapture are showing that the path to safe AI and Zero Trust adoption is faster, more seamless, and more programmable than ever before.

The traditional migration path for legacy SASE products—specifically the deployment of Secure Web Gateway (SWG) and Zero Trust Network Access (ZTNA)—often stretches to 18 months for large organizations. For a CIO, that represents a year and a half of technical debt and persistent security gaps.

By contrast, partners like TachTech and Adapture are proving that this marathon of misery is not a technical necessity. By using a unified connectivity cloud, they have compressed these timelines from 18 months down to just six weeks.

Kyle Jerome Thompson, a solutions architect at TachTech with 30 years of experience, says Cloudflare One fundamentally changes this calculus. By replacing legacy tools with Cloudflare's robust telemetry and global network, TachTech has slashed deployment times for large organizations down to just four to six weeks.

“Cloudflare has taken the ‘wizardry’ out of zero trust,” says Thompson. “Unlike legacy solutions that require continual care and feeding, Cloudflare Access is lightweight and ‘no-touch’ after deployment. It commoditizes security in the same way you think about plumbing or electricity: it just works, it’s cost-effective, and it lets our customers get back to their real day jobs.”

Legacy migrations typically fail when they are treated as a series of hardware replacements rather than a software transformation. Traditional vendors often require complex service chaining where traffic is passed from one inspection cluster to another. This creates a "trombone effect," adding latency and making troubleshooting nearly impossible.

When you decouple the security policy from the physical network, the migration speed changes. Our partners focus on three pillars to accelerate this transition:

Identity-first on-ramps: Instead of rebuilding network segments, they use existing identity provider (IdP) groups to define access.

Consolidated policy engines: By using a single pass for both SWG and ZTNA, administrators avoid the need to "sync" different products.

Cloud-native connectors: Using lightweight daemons like cloudflared allows for instant connectivity without opening inbound firewall ports.
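To make the first two pillars concrete, here is a minimal sketch of a single evaluation step that uses IdP group membership for private applications and a blocklist for web traffic. It is a toy model under stated assumptions, not Cloudflare's policy engine or API: the `Request` and `Policy` classes, their fields, and the hostnames are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Request:
    """One user request, already authenticated by the IdP (hypothetical shape)."""
    user: str
    idp_groups: frozenset[str]
    hostname: str


@dataclass
class Policy:
    """Hypothetical combined policy: private-app rules plus a web blocklist."""
    # Maps a private application hostname to the IdP groups allowed to reach it.
    app_groups: dict[str, set[str]] = field(default_factory=dict)
    # Public hostnames the gateway should block for everyone.
    blocked_hosts: set[str] = field(default_factory=set)

    def evaluate(self, req: Request) -> str:
        """Decide in one pass, so there is no second product to keep in sync."""
        if req.hostname in self.app_groups:
            # ZTNA-style decision: access follows existing IdP group membership.
            allowed = self.app_groups[req.hostname]
            return "allow" if req.idp_groups & allowed else "deny"
        if req.hostname in self.blocked_hosts:
            # SWG-style decision for ordinary web traffic.
            return "block"
        return "allow"
```

Because access is keyed on group names rather than network segments, onboarding a new team in this model means adding a group to `app_groups`, not redesigning subnets.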

The story is similar at Adapture, where they have a simple mission: improve IT performance and mitigate risk for clients. For one client, what started as a small contractor-focused footprint quickly exploded from 600 seats to a 5,000-seat deployment of Cloudflare Access.

This rapid elasticity proved that Cloudflare’s easy-to-use SASE platform bypasses legacy deployment hurdles—a transition Adapture characterized as “seamless.”

“Organizations can’t afford an implementation that stretches across months,” says Greg O’Connor, VP of Strategic Alliances at Adapture. “Cloudflare is creating a new standard when it comes to SASE implementation, bringing our clients to the cutting edge of SASE.”

In global infrastructure, unique environments and highly specialized workflows are the reality. A hallmark of the Cloudflare One architecture is that it is software-defined and extensible, allowing partners to unblock specific requirements without compromising the organization's overall security posture.

Cloudflare One is a truly composable and programmable platform, allowing proactive partners to move away from static GUIs and build without bounds. For example, when Thompson at TachTech encountered a developer team running Arch Linux, TachTech did not have to sacrifice visibility or create a security exception. Instead, the team extended the Cloudflare One Client to support the specific requirements of that environment.

By extracting the binaries from the Ubuntu .deb package and creating a custom PKGBUILD, the team got the client running as a native service on Arch. As a result, the organization maintained consistent device posture checks (verifying disk encryption and firewall status) even on non-standard developer workstations.
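The source does not describe the exact extraction steps, so the following is only a sketch of the container layout that makes this possible. A .deb file is an `ar` archive whose `data.tar.*` member holds the files to be installed. The helper names `list_ar_members` and `find_data_tar` are hypothetical, and a real PKGBUILD would more likely use standard archive tools than Python.

```python
AR_MAGIC = b"!<arch>\n"
HEADER_SIZE = 60  # fixed-size header that precedes every ar member


def list_ar_members(data: bytes) -> list[tuple[str, int, int]]:
    """Return (name, data_offset, size) for each member of an ar archive."""
    if not data.startswith(AR_MAGIC):
        raise ValueError("not an ar archive")
    members = []
    pos = len(AR_MAGIC)
    while pos + HEADER_SIZE <= len(data):
        header = data[pos:pos + HEADER_SIZE]
        if header[58:60] != b"`\n":
            raise ValueError("corrupt ar member header")
        # Name is the first 16 bytes, space padded; some tools append "/".
        name = header[0:16].decode("ascii").rstrip().rstrip("/")
        # Size is a decimal ASCII field at bytes 48..57.
        size = int(header[48:58].decode("ascii").strip())
        start = pos + HEADER_SIZE
        members.append((name, start, size))
        # Member data is padded to an even length.
        pos = start + size + (size % 2)
    return members


def find_data_tar(data: bytes) -> bytes:
    """Return the raw bytes of the data.tar.* member (the package payload)."""
    for name, start, size in list_ar_members(data):
        if name.startswith("data.tar"):
            return data[start:start + size]
    raise ValueError("no data.tar member found")
```

The bytes returned by `find_data_tar` are a compressed tarball, which could then be unpacked (for example with the standard `tarfile` module, when the compression is one it supports) into the directory a package build stages for installation.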

As organizations move toward agentic workflows, O’Connor notes “both threats and security measures are moving faster than ever.” Across the industry, the role of the SWG is evolving. It is no longer just about blocking malicious URLs; it’s about controlling the flow of data into Large Language Models (LLMs). Cloudflare One serves as the fast path to safe AI adoption by integrating security directly into the user's path to the Internet.

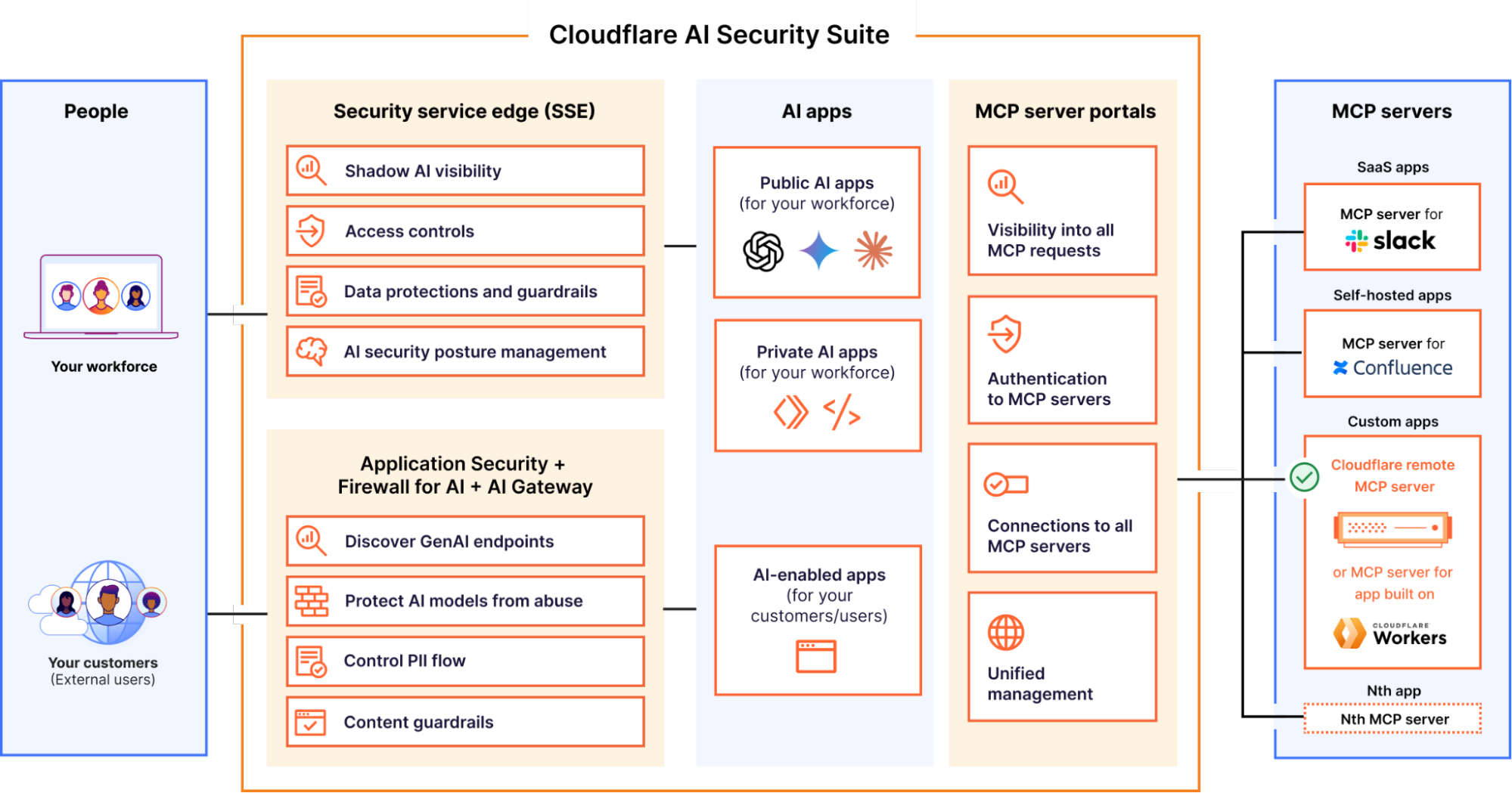

Our goal is to set our partners up for success across a wide variety of customer challenges. Rather than managing disparate security tools, our partners deploy the Cloudflare AI Security Suite to provide a unified defense across the entire AI lifecycle. This native set of controls covers three areas:

Secure your workforce as they use AI. For employees leveraging public LLMs, Cloudflare One provides a "safe harbor" that balances innovation with strict data governance.

Shadow AI visibility: Instantly discover and categorize which unapproved third-party AI tools are being used across your network via the Shadow AI dashboard.

AI confidence scores: Move beyond "block-all" policies by grading models on their compliance posture (SOC 2, ISO 42001) and data handling reliability before sanctioning them.

DLP AI prompt protection: Secure your intellectual property by using AI-powered Cloudflare Data Loss Prevention (DLP) to block sensitive source code, PII, or financials from being submitted into public training sets.

Secure your AI-powered apps. For the AI-powered applications your team builds and hosts, we provide a dedicated Firewall for AI to protect the integrity of your models.

LLM discovery: Automatically discover and label every LLM endpoint exposed to the internet, providing immediate visibility into your AI attack surface.

Request validation: Prevent "AI-jacking" by blocking prompt injections and malicious inputs designed to coerce your model into producing wrong or embarrassing outputs.

Response scrubbing: Ensure your model doesn't accidentally "hallucinate" sensitive internal data back to a customer by scrubbing the response for PII or toxic topics before it crosses the wire.

Secure agentic AI. As we move toward autonomous agents, MCP server portals provide a central registry and least-privilege control over how AI interacts with corporate resources like Slack or Confluence. This prevents the autonomous horror stories of data heists and rogue actions by returning visibility and control to IT admins.

The Cloudflare AI Security Suite acts as a secure intermediary between users and AI ecosystems, providing visibility, data protection, and governance for public, private, and agentic AI applications.
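The prompt protection idea described above, inspecting text before it reaches a public model, can be illustrated with a small sketch. This is not how Cloudflare DLP is implemented (the article describes it as AI-powered); it is a simple regex-based stand-in, and the pattern set and the `scan_prompt` and `decide` helpers are hypothetical.

```python
import re

# Illustrative patterns only; a production DLP profile would be far broader.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,19}\b"),
}


def luhn_valid(number: str) -> bool:
    """Luhn checksum, used to cut false positives on long digit runs."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return len(digits) >= 13 and total % 10 == 0


def scan_prompt(prompt: str) -> list[str]:
    """Return the labels of sensitive data types found in the prompt."""
    findings = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(prompt):
            if label == "card_number" and not luhn_valid(match.group()):
                continue
            findings.append(label)
            break  # one finding per label is enough to make a decision
    return findings


def decide(prompt: str) -> str:
    """Block the prompt if anything sensitive was detected, otherwise allow it."""
    return "block" if scan_prompt(prompt) else "allow"
```

The same scan could in principle be run on a model's response before it is returned, which is the shape of the response scrubbing control listed above.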

If you are a CIO still tethered to a multi-year migration roadmap, you are operating at a competitive disadvantage. Cloudflare One integrates your network and security into a single fabric that is fast, safe, and infinitely more programmable than the legacy solution in your current stack.

Don't let the fear of a difficult migration keep you trapped in a legacy mindset. Our partners are proving every day that the move to SASE can be fast, effective, and—dare we say—easy.

Connect with a Cloudflare One expert to start mapping your migration.
